- The paper demonstrates that composable chain-of-thought training data improves zero-shot reasoning in LLMs.

- It details a methodology using prefix-suffix tagging and proxy prefix sequences to augment atomic reasoning tasks.

- Results show that multitask learning combined with model merging enhances performance even with limited supervision.

Learning Composable Chains-of-Thought

Introduction

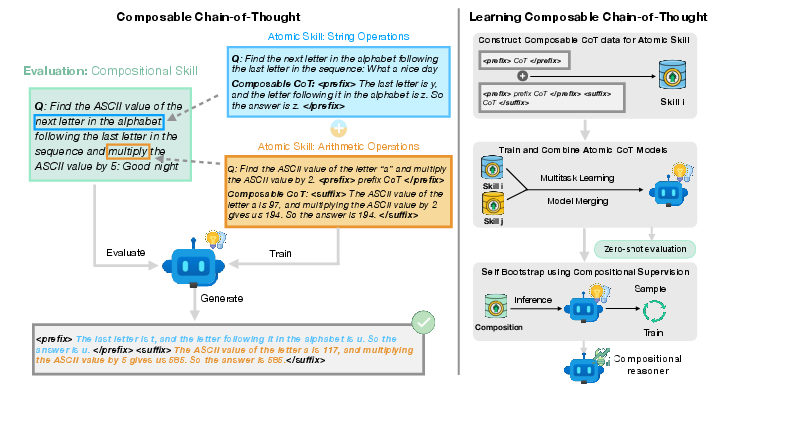

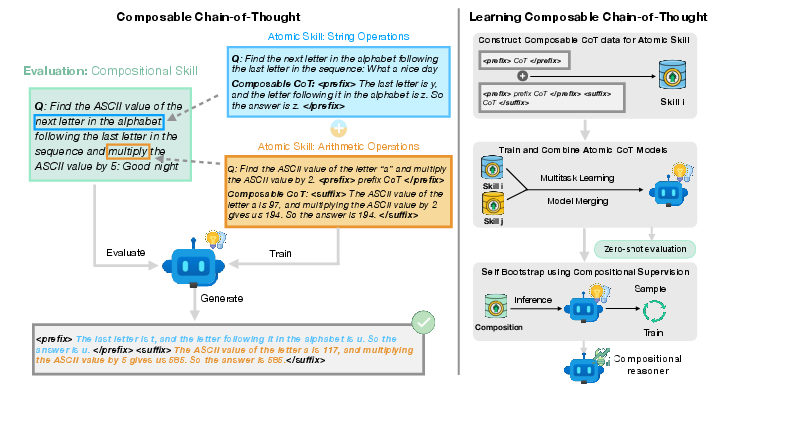

The study introduces an approach for training LLMs to reason better with chain-of-thought (CoT) data. The problem it targets is that LLMs trained on CoT data for specific tasks generalize poorly beyond those tasks. To address this, the work proposes a data augmentation strategy that constructs Composable CoT training data, so that the CoTs learned for atomic reasoning tasks can be combined to solve more complex, compositional tasks.

The central premise is that training on atomic CoT tasks in their standard format generally limits compositionality, whereas modifying the CoT format can improve generalization. In the experiments, models trained with the composable format outperformed standard multitask learning approaches, particularly on compositional tasks in the zero-shot setting.

Figure 1: Pipeline illustrating the construction and application of Composable CoT data in LLM training.

Methodology

Composable CoT Construction

The method changes the format of the CoT in the training data. For each atomic task, the CoT data is reformatted into prefix and suffix parts that simulate how tasks combine in a composition. The augmentation has three components:

- Chain-of-Thought Tags: Distinct tags mark prefix and suffix CoTs, so the model can read and generate atomic CoTs as parts of a composition.

- Proxy Prefix CoTs: Random sequences serve as proxy prefixes during training, so that the model generalizes to prefix combinations it has not seen when they appear at inference time.

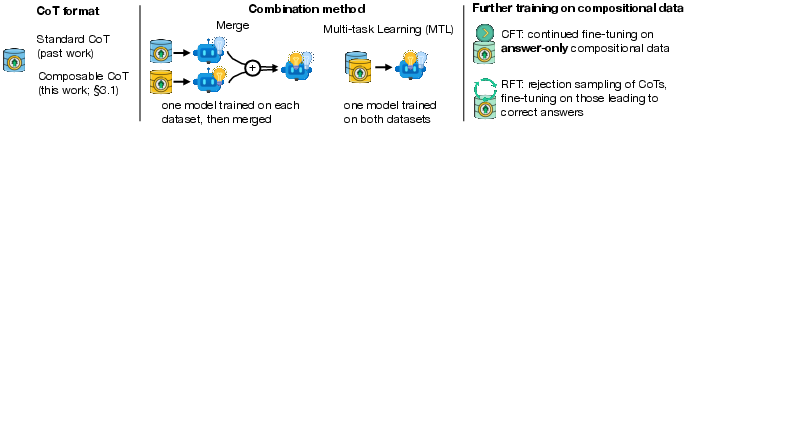

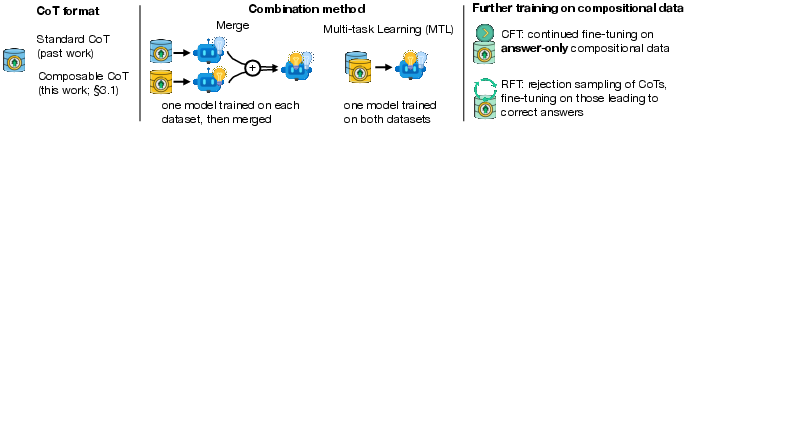

- Multitask Learning and Model Merging: The augmented data from multiple atomic tasks is used either to train a single model jointly or to train separate models that are then merged, yielding a model that can reason compositionally.
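
The augmentation steps listed above can be sketched in a few lines of Python. This is a minimal illustration, not the paper's implementation: the tag strings, the function names, the random-letter proxy prefix, and the choice to place the proxy prefix in the prompt are all assumptions made for the example.

```python
import random
import string

# Hypothetical tag strings. The text only states that prefix and suffix
# CoTs receive distinct tags; the exact tokens are an assumption.
PREFIX_OPEN, PREFIX_CLOSE = "<prefix>", "</prefix>"
SUFFIX_OPEN, SUFFIX_CLOSE = "<suffix>", "</suffix>"


def random_proxy_prefix(rng, n_tokens=8, token_len=4):
    """Build a random sequence of lowercase pseudo-tokens (assumed proxy format)."""
    tokens = [
        "".join(rng.choice(string.ascii_lowercase) for _ in range(token_len))
        for _ in range(n_tokens)
    ]
    return " ".join(tokens)


def as_prefix_example(question, atomic_cot):
    """Format an atomic CoT so that it plays the prefix role."""
    return {
        "prompt": question,
        "target": f"{PREFIX_OPEN}{atomic_cot}{PREFIX_CLOSE}",
    }


def as_suffix_example(question, atomic_cot, rng):
    """Format an atomic CoT as a suffix that follows a random proxy prefix."""
    proxy = random_proxy_prefix(rng)
    return {
        # Assumption: the proxy prefix is given as context, not trained on.
        "prompt": f"{question}\n{PREFIX_OPEN}{proxy}{PREFIX_CLOSE}",
        "target": f"{SUFFIX_OPEN}{atomic_cot}{SUFFIX_CLOSE}",
    }


def augment(dataset, seed=0):
    """Turn (question, cot) pairs into prefix-role and suffix-role examples."""
    rng = random.Random(seed)
    augmented = []
    for question, cot in dataset:
        augmented.append(as_prefix_example(question, cot))
        augmented.append(as_suffix_example(question, cot, rng))
    return augmented


if __name__ == "__main__":
    toy = [("What is the last letter of 'cat'?", "The word is cat. The last letter is t.")]
    for record in augment(toy):
        print(record)
```

Each atomic example yields two training records here: one where its CoT plays the prefix role, and one where it follows a random proxy prefix in the suffix role.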

Results and Analysis

Zero-Shot Compositional Generalization

The approach improved zero-shot performance on compositional tasks. ComposableCoT models surpassed their StandardCoT counterparts on tasks involving compositional reasoning, and in some cases rivaled models trained directly on compositional datasets.

The experiments indicate that model merging and multitask learning can each foster compositional generalization, and that which one works better depends on the atomic tasks involved. Notably, merging was sometimes unstable, as shown by discrepancies in compositional task performance.
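
To make the merging option concrete, the sketch below averages the parameters of two models trained on different atomic tasks. The text does not say which merging algorithm the study uses, so weighted parameter averaging is swapped in here purely as an illustrative stand-in; `merge_state_dicts` and the toy parameter names are hypothetical, and parameters are plain Python lists rather than real tensors.

```python
def merge_state_dicts(state_dicts, weights=None):
    """Weighted average of parameter dicts that share the same names and sizes."""
    if not state_dicts:
        raise ValueError("need at least one model to merge")
    if weights is None:
        # Default to a uniform average over all models.
        weights = [1.0 / len(state_dicts)] * len(state_dicts)
    if len(weights) != len(state_dicts):
        raise ValueError("one weight per model is required")

    names = state_dicts[0].keys()
    for sd in state_dicts[1:]:
        if sd.keys() != names:
            raise ValueError("models must have identical parameter names")

    merged = {}
    for name in names:
        size = len(state_dicts[0][name])
        merged[name] = [
            sum(w * sd[name][i] for w, sd in zip(weights, state_dicts))
            for i in range(size)
        ]
    return merged


if __name__ == "__main__":
    # Toy "models" trained on two different atomic tasks (made-up values).
    model_a = {"layer.weight": [1.0, 2.0], "layer.bias": [0.0]}
    model_b = {"layer.weight": [3.0, 6.0], "layer.bias": [1.0]}
    print(merge_state_dicts([model_a, model_b]))
```

Multitask learning, by contrast, would train a single model on the union of the augmented atomic datasets, with no post-hoc combination of weights.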

Figure 2: Illustration of model architecture and training methodologies employed in the study.

With limited compositional supervision, the ComposableCoT models also adapted better. Aided by rejection sampling fine-tuning, these models improved further on compositional tasks, which suggests the approach makes efficient use of scarce compositional data.
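
Rejection sampling fine-tuning can be sketched as follows: sample several CoTs per question, keep only those whose final answer matches the gold label, and fine-tune on the survivors. This is a generic sketch under stated assumptions, not the study's pipeline: the `Answer:` marker, the function names, and the stub generator standing in for a model are all hypothetical.

```python
def extract_answer(cot):
    """Return the text after the last 'Answer:' marker (assumed convention)."""
    marker = "Answer:"
    if marker not in cot:
        return None
    return cot.rsplit(marker, 1)[1].strip()


def rejection_sample(examples, generate, num_samples=4):
    """Collect sampled CoTs whose extracted answer equals the gold answer."""
    kept = []
    for question, gold in examples:
        seen = set()
        for _ in range(num_samples):
            cot = generate(question)
            # Reject wrong or unparseable answers, and drop exact duplicates.
            if extract_answer(cot) == gold and cot not in seen:
                seen.add(cot)
                kept.append({"prompt": question, "target": cot})
    return kept


if __name__ == "__main__":
    # Stub generator that cycles through fixed outputs instead of a real model.
    canned = iter([
        "The word is cat. Answer: t",
        "The word is cat. Answer: a",
        "Last letter first. Answer: t",
        "No final answer given",
    ])
    data = [("What is the last letter of 'cat'?", "t")]
    for record in rejection_sample(data, lambda q: next(canned)):
        print(record)
```

The kept records would then serve as fine-tuning data for the compositional task; only gold answers are needed, not gold CoTs.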

Discussion

The findings support the idea that decomposing tasks and recombining what was learned on each broadens the range of problems LLMs can reason about. Training protocols that build in compositional reasoning may let LLMs extend beyond in-distribution tasks, a step toward more robust and efficient AI systems.

Moreover, the study highlights that the choice between multitask learning and model merging is not clear-cut and depends on the tasks being composed.

Conclusion

The study presents a method for improving LLMs' reasoning capabilities via Composable CoT training. Challenges remain in scaling beyond pairwise compositions and in handling more complex tasks, but the proposed framework offers a basis for more principled design of reasoning models. Future work could test the framework at larger scale, particularly in dynamic real-world scenarios.