- The paper introduces an SSH honeypot that uses LLM-driven dynamic response generation to simulate realistic system responses to attacker commands.

- The paper benchmarks 13 LLM variants using metrics like cosine similarity and BLEU score to evaluate trade-offs in accuracy, latency, and memory overhead.

- The findings highlight that LLM-based dynamic honeypots can capture richer threat intelligence while facing challenges such as computational overhead and output hallucination.

"LLMHoney: A Real-Time SSH Honeypot with LLM-Driven Dynamic Response Generation" (2509.01463)

Introduction

The paper introduces LLMHoney, an SSH honeypot that uses large language models (LLMs) to generate dynamic, contextually appropriate responses to attacker commands. Traditional honeypots often rely on static responses that skilled adversaries can easily identify, which limits their ability to keep sophisticated attackers engaged. By integrating LLMs into its architecture, LLMHoney aims to offer a high-interaction experience without the risks of running a real operating system. It can respond plausibly to unexpected commands while a stateful virtual filesystem keeps the simulated system consistent across a session.

System Architecture

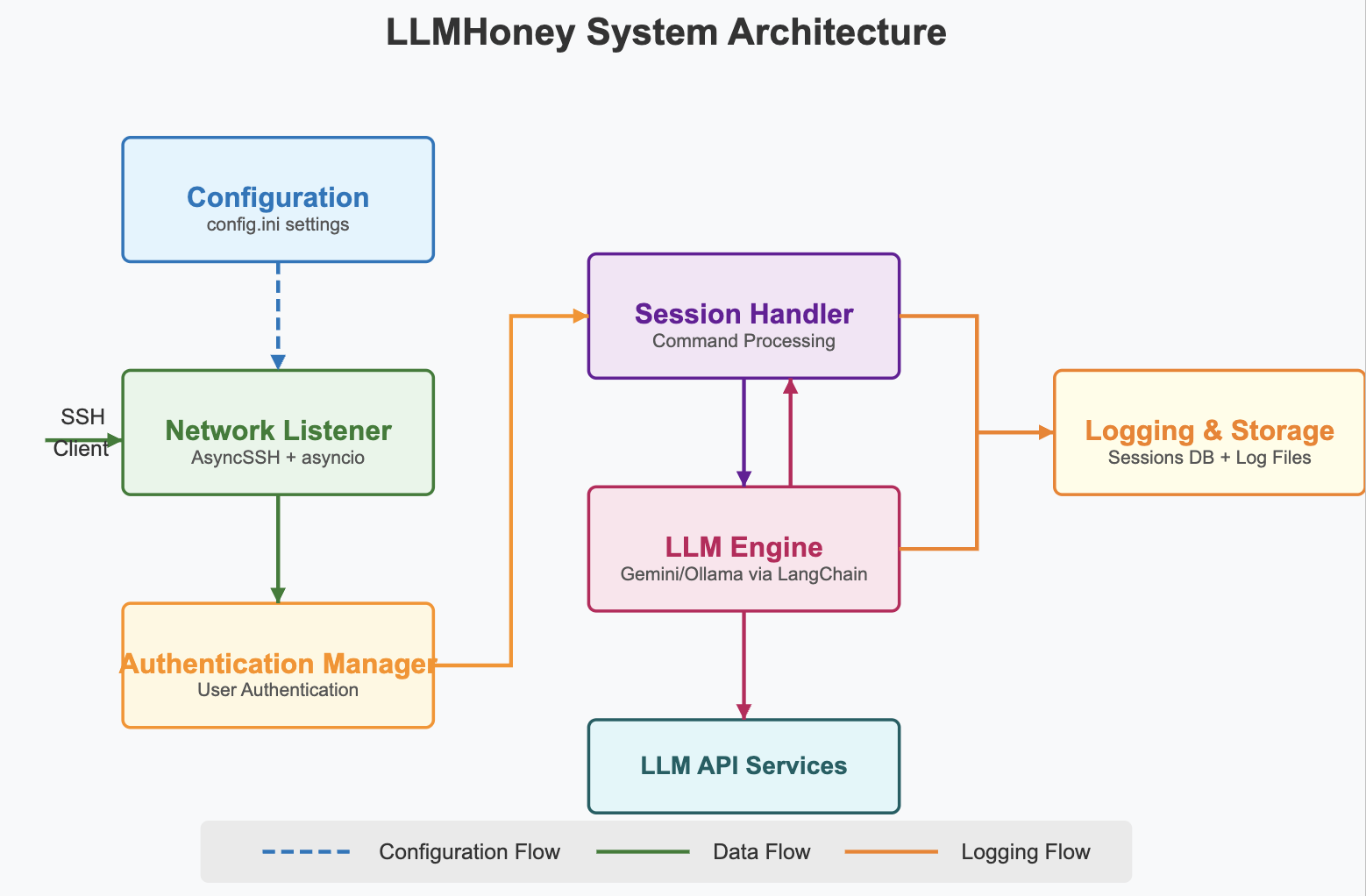

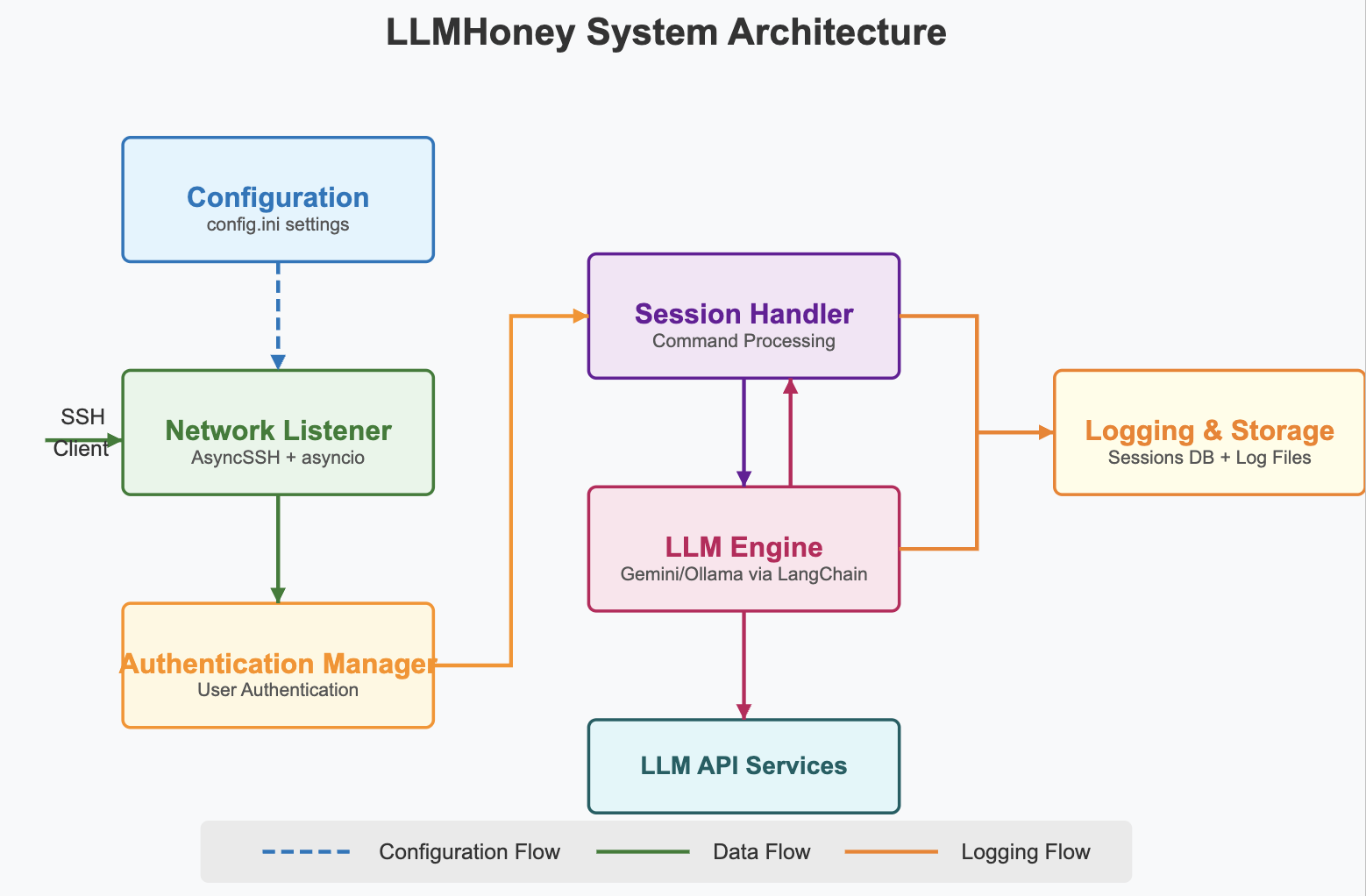

LLMHoney's architecture comprises several cooperating components that together accept connections, process commands, and generate responses.

Figure 1: High-level architecture of LLMHoney.

The core components include configuration settings, a network listener to manage SSH connections, an authentication manager for handling user credentials, a session handler integrated with an LLM engine for dynamic command processing, and logging mechanisms for session data storage. The incorporation of LLMs into the command-processing loop enables real-time generation of responses, significantly enhancing the interactive capabilities of the honeypot compared to traditional, script-based systems.
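The command-processing loop described above can be sketched roughly as follows. This is a minimal illustration, not the paper's implementation: the `VirtualFS` class, the `handle_command` function, the choice of which commands are answered locally, and the `fake_llm` stand-in are all hypothetical names and assumptions introduced here.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class VirtualFS:
    """Hypothetical in-memory filesystem state kept per session."""
    cwd: str = "/root"
    files: Dict[str, str] = field(default_factory=dict)  # absolute path -> content

    def resolve(self, path: str) -> str:
        # Simplified: handles absolute paths and plain relative names only.
        if path.startswith("/"):
            return path
        return self.cwd.rstrip("/") + "/" + path


def handle_command(cmd: str, fs: VirtualFS,
                   llm: Callable[[str], str], log: List[dict]) -> str:
    """Answer state-dependent commands locally; defer everything else to the LLM."""
    parts = cmd.strip().split()
    if not parts:
        output = ""
    elif parts[0] == "pwd":
        output = fs.cwd
    elif parts[0] == "cd" and len(parts) == 2:
        fs.cwd = fs.resolve(parts[1])
        output = ""
    elif parts[0] == "touch" and len(parts) == 2:
        fs.files.setdefault(fs.resolve(parts[1]), "")
        output = ""
    elif parts[0] == "cat" and len(parts) == 2 and fs.resolve(parts[1]) in fs.files:
        output = fs.files[fs.resolve(parts[1])]
    else:
        # Assumed prompt layout: pass session state so the model stays consistent.
        prompt = f"cwd={fs.cwd}\nfiles={sorted(fs.files)}\ncommand={cmd}"
        output = llm(prompt)
    log.append({"command": cmd, "output": output})  # session logging
    return output


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call (assumption for this sketch)."""
    return "bash: command not found"
```

Keeping filesystem state outside the model is one plausible way to get the consistency the paper attributes to its stateful virtual filesystem: the model only has to produce text, while facts such as the working directory come from tracked state.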

Evaluation Methodology

The paper outlines a comprehensive evaluation of LLMHoney, detailing the methodology used to assess performance across various metrics including accuracy, latency, and memory overhead.

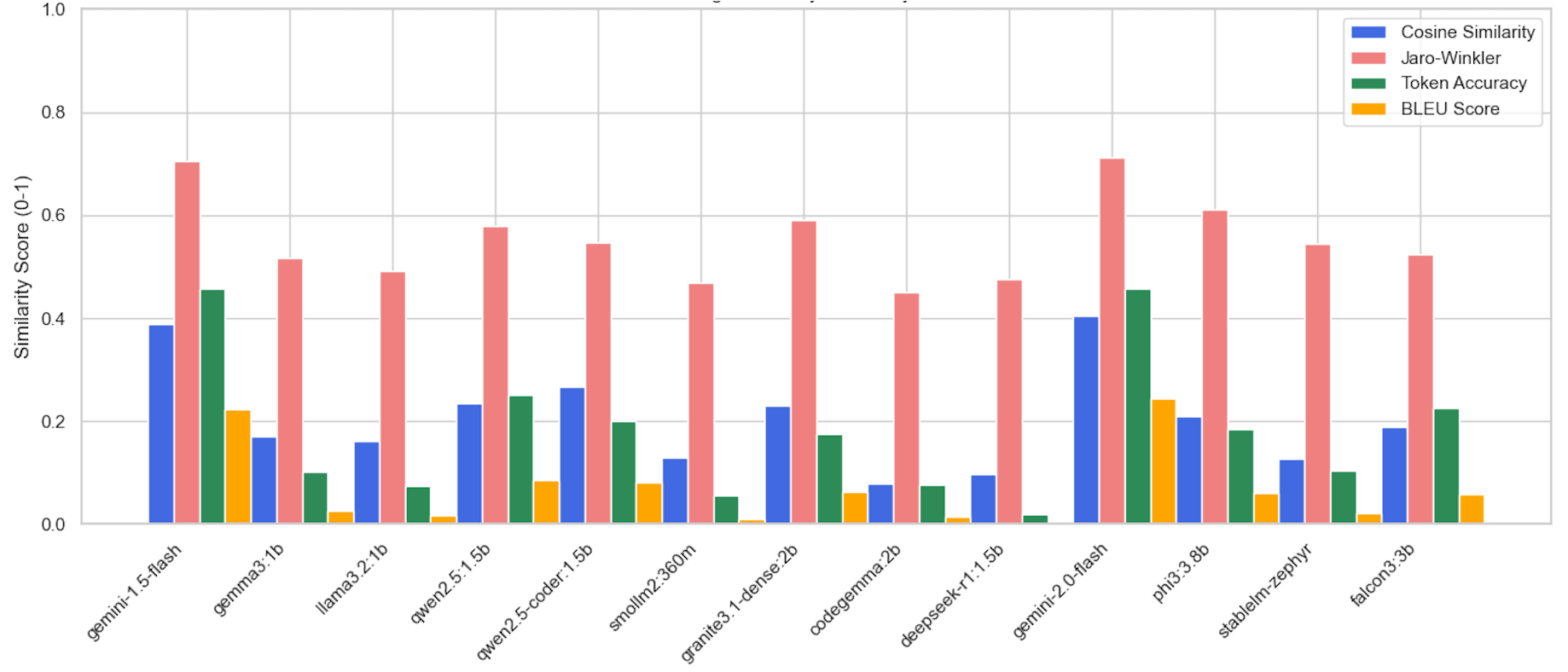

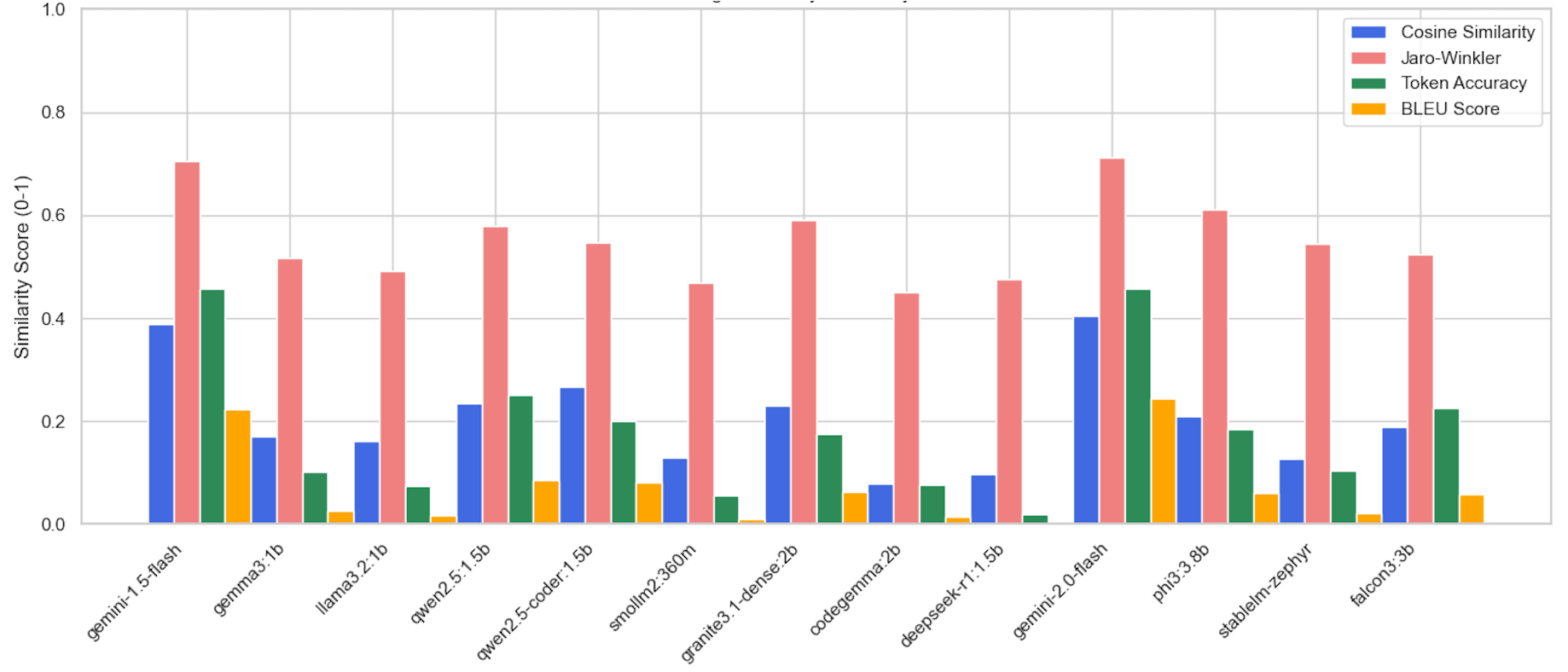

Figure 2: Average similarity metrics for each model: Cosine Similarity, Jaro-Winkler, Token Accuracy, and BLEU Score.

Using a testbed of 138 Linux commands, LLMHoney was benchmarked with 13 LLM variants ranging from 0.36B to 3.8B parameters. The evaluation pipeline compared each model's output against ground-truth outputs using exact string match accuracy, token-level accuracy, cosine similarity, Jaro-Winkler similarity, Levenshtein distance, and BLEU score. For each model, round-trip latency and memory usage were also recorded to expose the trade-off between output realism and computational cost.
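To make a few of these metrics concrete, here is a small pure-Python sketch. The paper's exact definitions are not given in this summary, so the tokenization (whitespace split), the bag-of-words form of cosine similarity, and the normalization used in `token_accuracy` are assumptions chosen for illustration.

```python
import math
from collections import Counter


def exact_match(pred: str, ref: str) -> bool:
    """True if the outputs are identical after trimming surrounding whitespace."""
    return pred.strip() == ref.strip()


def token_accuracy(pred: str, ref: str) -> float:
    """Share of aligned token positions that match, normalized by the
    longer sequence (assumed definition)."""
    p, r = pred.split(), ref.split()
    if not p and not r:
        return 1.0
    hits = sum(1 for a, b in zip(p, r) if a == b)
    return hits / max(len(p), len(r))


def cosine_similarity(pred: str, ref: str) -> float:
    """Cosine similarity between bag-of-words count vectors (assumed form)."""
    a, b = Counter(pred.split()), Counter(ref.split())
    dot = sum(a[t] * b[t] for t in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def levenshtein(s: str, t: str) -> int:
    """Edit distance via the standard row-by-row dynamic program."""
    prev = list(range(len(t) + 1))
    for i, cs in enumerate(s, 1):
        cur = [i]
        for j, ct in enumerate(t, 1):
            cur.append(min(prev[j] + 1,                # deletion
                           cur[j - 1] + 1,             # insertion
                           prev[j - 1] + (cs != ct)))  # substitution
        prev = cur
    return prev[-1]
```

Exact match is the strictest of these; the similarity scores give partial credit when an output differs from the ground truth only in details such as timestamps or sizes.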

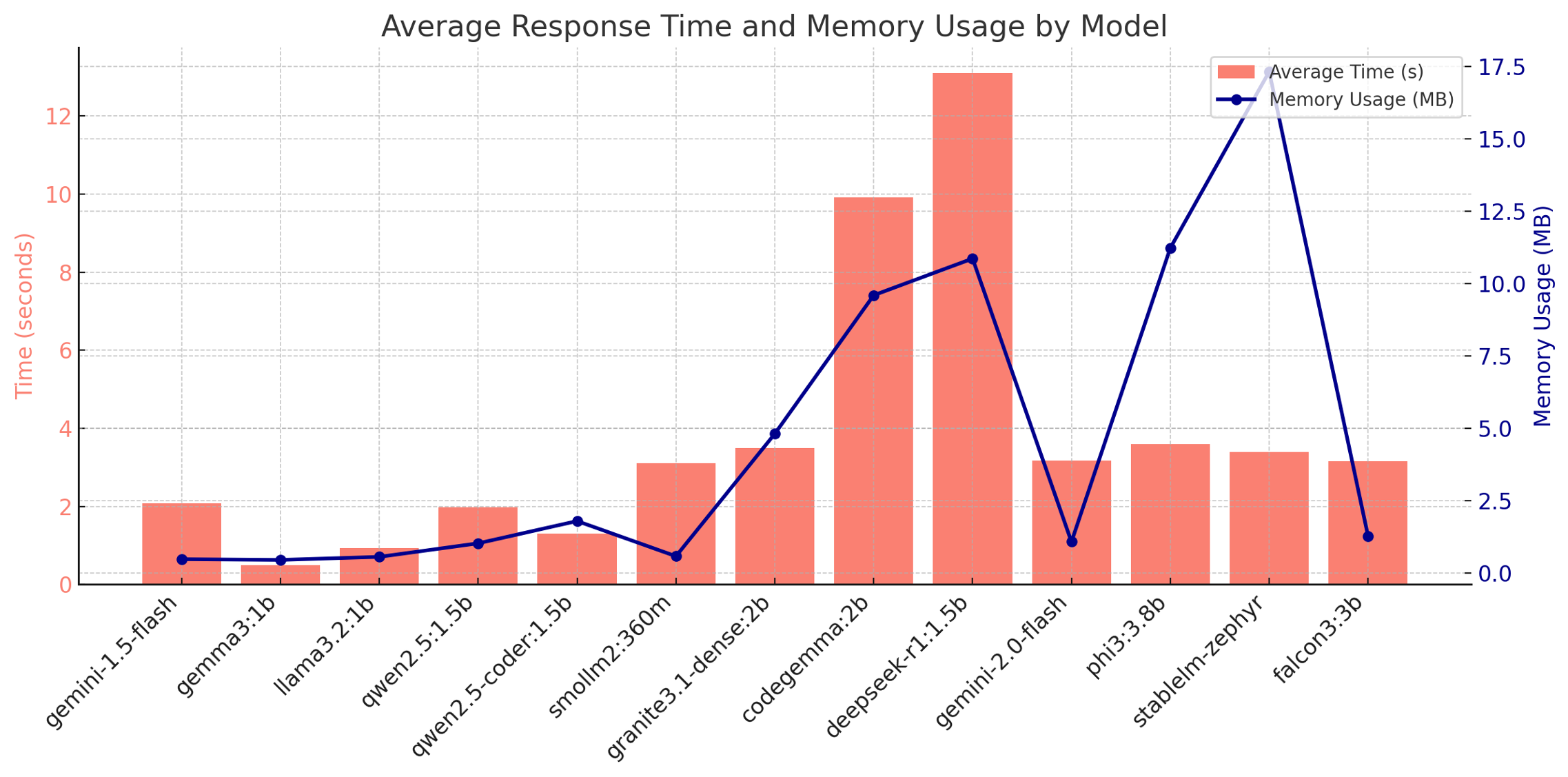

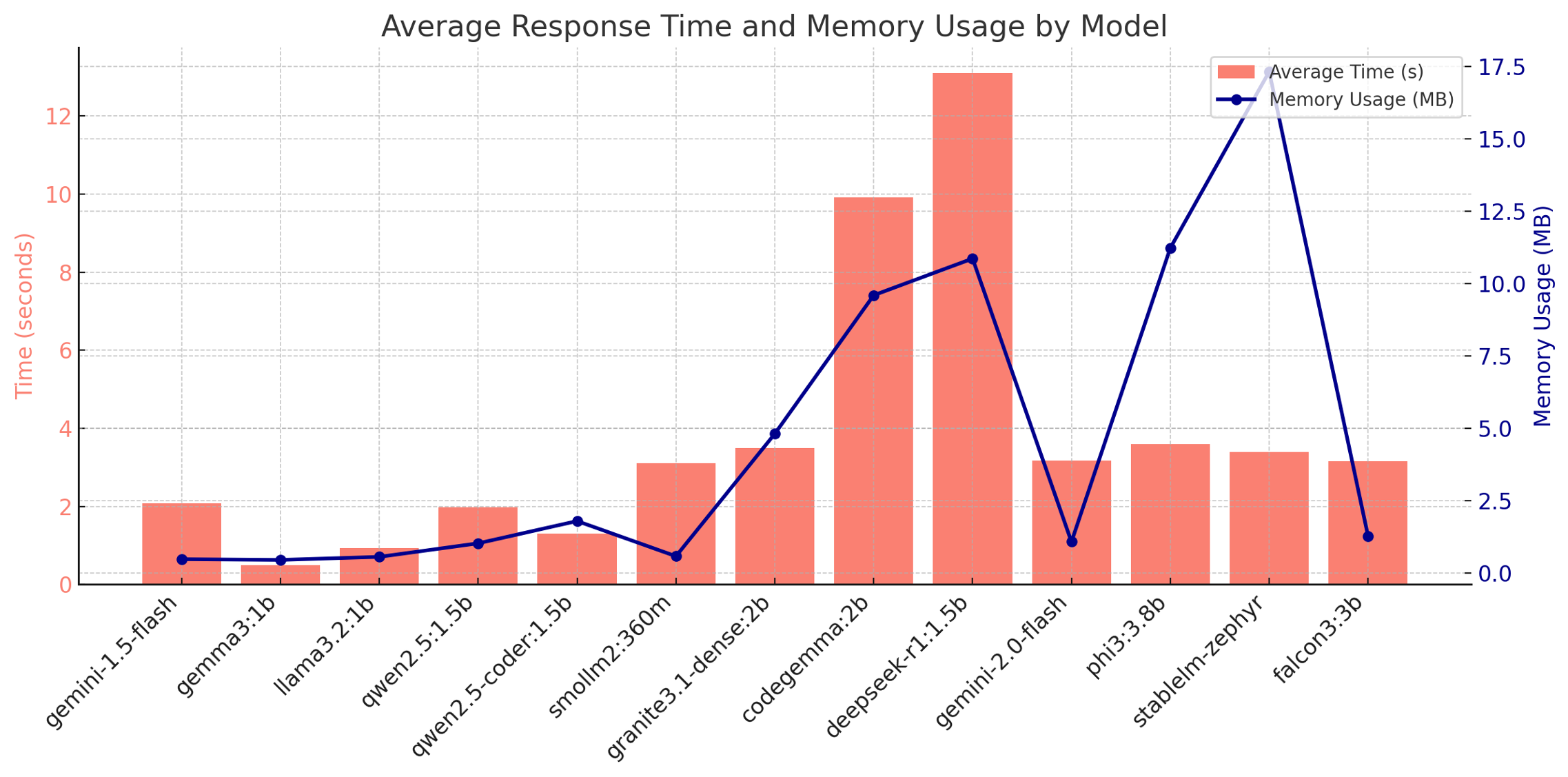

Figure 3: Mean response latency (red bars, left axis) and absolute memory overhead (blue line, right axis) per model.
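A latency and memory measurement of this kind could be sketched as below. The `profile_backend` function and its return keys are hypothetical, and the measurement procedure is an assumption rather than the paper's method. Note in particular that `tracemalloc` only tracks Python allocations in the calling process, so it would not capture the footprint of a model served by a separate process.

```python
import statistics
import time
import tracemalloc
from typing import Callable, Dict, Iterable


def profile_backend(generate: Callable[[str], str],
                    commands: Iterable[str]) -> Dict[str, float]:
    """Time each call to `generate` and record peak Python-level memory."""
    cmds = list(commands)
    if not cmds:
        raise ValueError("need at least one command to profile")
    latencies = []
    tracemalloc.start()
    for cmd in cmds:
        start = time.perf_counter()
        generate(cmd)  # round trip: command in, response out
        latencies.append(time.perf_counter() - start)
    _, peak_bytes = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    return {
        "mean_latency_s": statistics.mean(latencies),
        "max_latency_s": max(latencies),
        "peak_python_mem_mib": peak_bytes / (1024 * 1024),
    }
```

Here `generate` stands for whatever function sends a command to the model and returns its response.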

Results and Analysis

The comparative analysis reveals significant variance in model performance, highlighting the trade-offs between response accuracy and resource consumption.

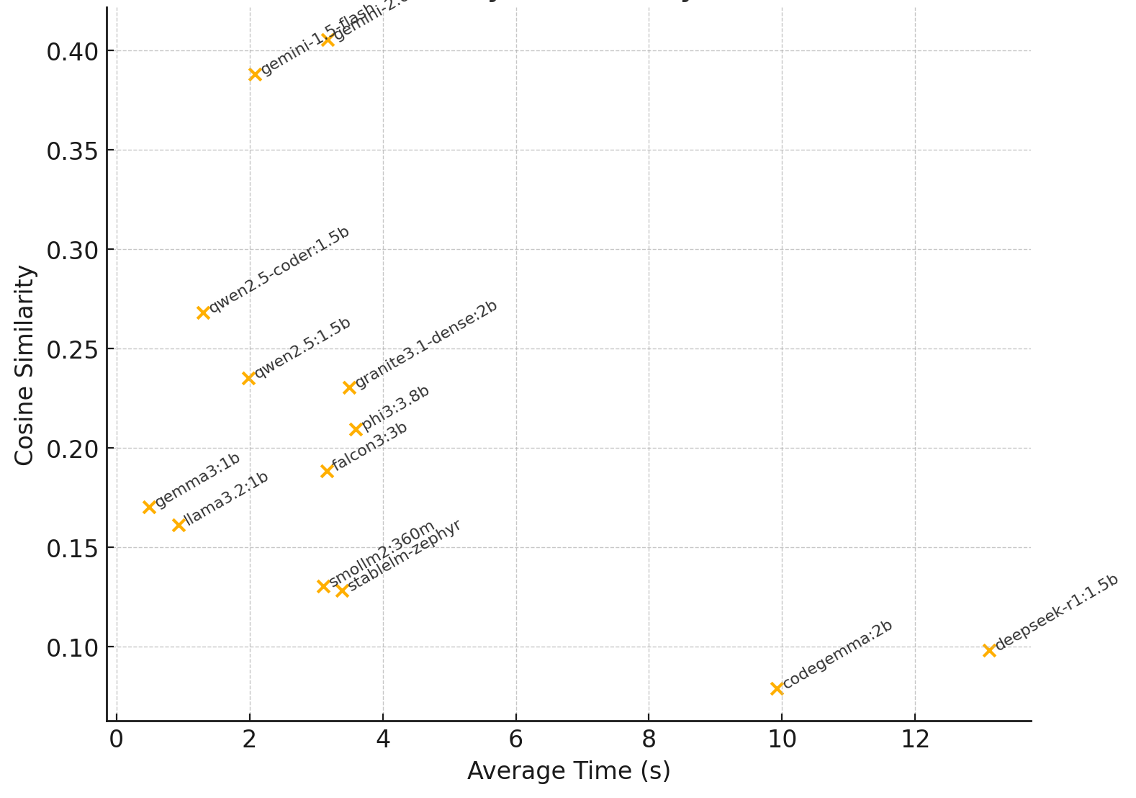

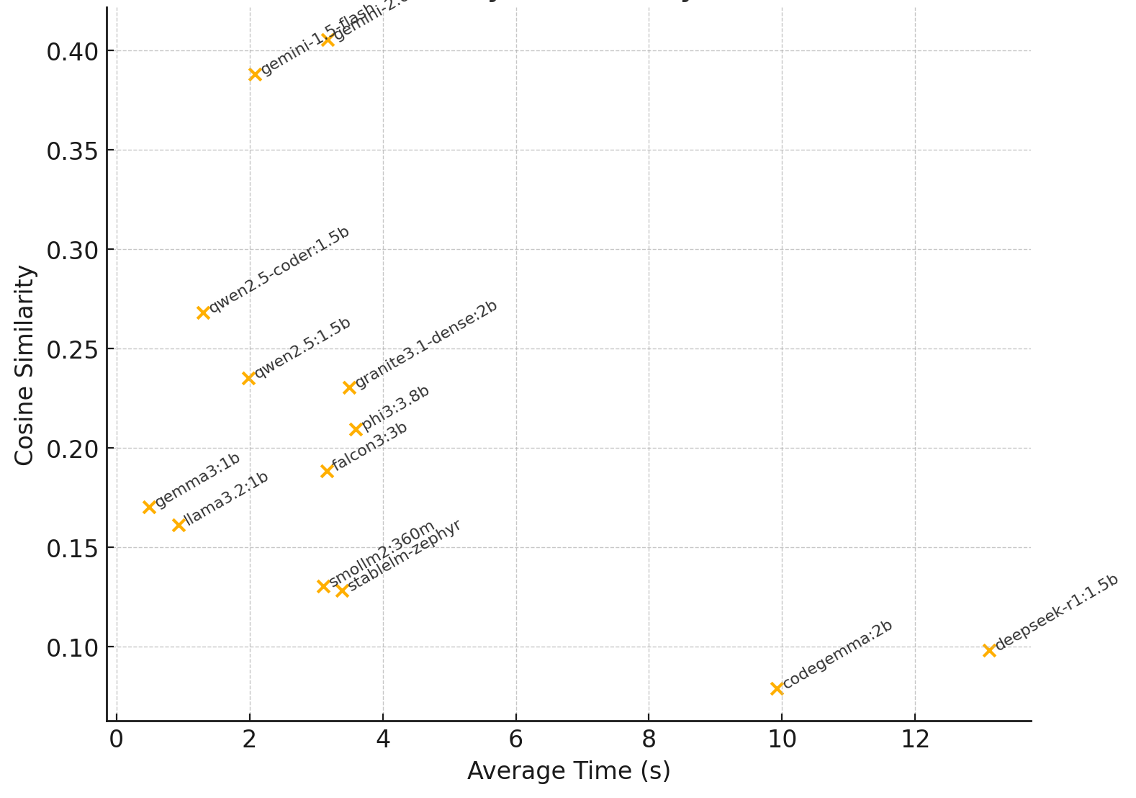

Figure 4: Trade-off between average response time and Cosine Similarity.

Models such as Gemini-2.0 and Phi3-3.8B stood out for providing reliable, accurate responses with moderate latency and memory overhead. Smaller models responded faster but hallucinated more often and were less accurate. The findings indicate that LLM-driven honeypots can enhance attacker engagement by providing more dynamic and believable system interactions than their traditional counterparts.
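One way to reason about such a trade-off is to keep only the models that no other model beats on both latency and similarity (a Pareto front). The sketch below is an illustration added here, not an analysis from the paper; `ModelResult` and `pareto_front` are hypothetical names, and any numbers passed in would need to come from real measurements.

```python
from typing import List, NamedTuple


class ModelResult(NamedTuple):
    name: str
    latency_s: float  # mean response time, lower is better
    cosine: float     # mean cosine similarity, higher is better


def pareto_front(results: List[ModelResult]) -> List[ModelResult]:
    """Return models that are not dominated on (latency, similarity)."""
    front = []
    for r in results:
        dominated = any(
            o.latency_s <= r.latency_s and o.cosine >= r.cosine
            and (o.latency_s < r.latency_s or o.cosine > r.cosine)
            for o in results
        )
        if not dominated:
            front.append(r)
    return sorted(front, key=lambda r: r.latency_s)
```

A model off the front is slower and no more accurate (or less accurate and no faster) than some alternative, so the choice for a deployment narrows to the models on the front.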

Practical and Theoretical Implications

LLMHoney represents a promising advancement in honeypot technology, offering a more adaptive solution for cybersecurity defense. Its ability to generate contextually appropriate outputs enhances the realism of attacker engagements, potentially leading to richer threat intelligence. However, the paper also notes the challenges associated with increased computational demands and the risks of output hallucination, which could undermine the deception if not carefully managed.

Future Directions

The paper concludes with a discussion of future research avenues, including automated hallucination detection and an expanded virtual filesystem for deeper simulation. It also suggests integrating adaptive strategies to counter the methods sophisticated attackers use to detect honeypots. These developments are expected to improve system efficiency and effectiveness, making AI-driven honeypots viable for broader deployment in cybersecurity frameworks.
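As a rough illustration of what a first-pass hallucination check might look like, the sketch below flags responses containing phrasing a real shell would not emit. The pattern list and the `looks_hallucinated` function are assumptions made for this example; the paper proposes automated detection as future work and does not specify a method.

```python
import re

# Hypothetical markers of chat-style output leaking into a "terminal" response.
SUSPICIOUS_PATTERNS = [
    re.compile(r"\bas an ai\b", re.IGNORECASE),
    re.compile(r"\blanguage model\b", re.IGNORECASE),
    re.compile(r"\bi (?:cannot|can't|am unable to)\b", re.IGNORECASE),
    re.compile(r"^`{3}", re.MULTILINE),  # markdown code fence at line start
    re.compile(r"^(?:sure|certainly|here is|here's)\b", re.IGNORECASE),
]


def looks_hallucinated(output: str) -> bool:
    """Return True if the output matches any chat-style pattern."""
    return any(p.search(output) for p in SUSPICIOUS_PATTERNS)
```

A flagged response could be regenerated or replaced with a generic shell error before it reaches the attacker. A pattern check like this catches only obvious breaks in character, not plausible-looking but factually inconsistent output.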

Conclusion

LLMHoney illustrates the potential of leveraging AI and LLMs within honeypot systems to create more engaging and realistic decoys that gather valuable intelligence from adversarial interactions. The findings highlight the benefits of dynamic response generation balanced with the computational and consistency challenges inherent in such a system. As AI technologies continue to evolve, LLMHoney represents an important step towards more autonomous and intelligent cybersecurity defenses.