- The paper presents closed-form fidelity expressions for hybrid protocols, establishing necessary and sufficient criteria for when combining QEC with LDD improves logical error suppression.

- It systematically compares four protocols—physical DD, QEC-only, LDD-only, and LDD+QEC—using weight enumerator polynomials to quantify performance under depolarizing noise.

- Numerical evaluations on standard codes like the Steane code demonstrate that optimal logical performance depends critically on the co-design of DD generators and decoder strategies.

Quantum Error Correction and Dynamical Decoupling: Analytical Comparison and Co-Design Criteria

Introduction

The paper "Quantum Error Correction and Dynamical Decoupling: Better Together or Apart?" (2602.19042) undertakes a systematic and rigorous analysis of the interplay between quantum error correction (QEC) and dynamical decoupling (DD), scrutinizing both their synergy and potential antagonism when these error mitigation strategies are combined. The work dissects a general hybrid protocol wherein DD is realized at the logical level (LDD) within a stabilizer code, followed sequentially by syndrome measurement and recovery, and directly compares this with protocols using either technique in isolation. Deriving closed-form, code- and decoder-dependent expressions for logical entanglement fidelity, the authors identify quantitative and checkable conditions for when combining LDD and QEC yields a genuine logical-performance improvement. Notably, the research provides not only abstract criteria but also explicit numerical evaluation on standard codes, revealing scenarios of both hybrid advantage and neutral/negative interference.

Analytical Framework and Protocol Taxonomy

The framework assumes a single "encode-wait-decode" cycle under an effective depolarizing Pauli noise model, optionally interleaving (L)DD operations during the idle period. The analysis encompasses four principal strategies:

- DD-phys: Physical DD on k unencoded qubits (serving as a reference baseline).

- QEC-only: Syndrome-based error correction with no DD.

- LDD-only: Logical DD on the code, followed by syndrome measurement but without coherent recovery.

- LDD+QEC: Hybrid protocol: logical DD followed by QEC.

Errors are described via weight enumerator polynomials (WEPs) over the phase-stripped n-qubit Pauli group. The error model incorporates parameters p (single-qubit error rate), λ (DD suppression factor, $0$ is ideal, $1$ is no suppression), and ϵ (recovery failure rate, allowing interpolation between perfect and fully random recovery). These key ingredients permit explicit fidelity formulas for any stabilizer code, recovery map, and LDD group.

Main Theoretical Results

The authors derive analytical expressions for logical fidelity (probability of no net logical error, equivalently, entanglement fidelity) of each protocol as functions of WEPs, evaluated at z=p/(3−3p). For example, the logical error probability for LDD+QEC is expressed as

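$$1 - F_{\mathrm{hyb}} = \frac{1}{U+S}\Big\{\big[E_U + \epsilon\,(C_U - 4^{-k} D_U)\big] + \big[\lambda E_S + \epsilon\lambda\,(C_S - 4^{-k} D_S)\big]\Big\}$$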

where the unsuppressed (U) and suppressed (S) sectors are defined by commutation with the LDD group, the subscripts U and S mark the sector to which each term is restricted, and E, C, and D denote code- and decoder-specific error classes.
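The evaluation point z=p/(3−3p) can be motivated with a short numerical check. The sketch below assumes the standard i.i.d. single-qubit depolarizing channel, in which X, Y, and Z each occur with probability p/3; that reading of the "effective depolarizing Pauli noise model" is an assumption here, and the helper names are illustrative. Under it, a specific Pauli of weight w has probability (p/3)^w (1−p)^(n−w) = (1−p)^n z^w, so the total probability of any set of Paulis is (1−p)^n times its weight enumerator evaluated at z.

```python
from math import comb, isclose


def z_of_p(p: float) -> float:
    """Weight-enumerator variable z = p / (3 - 3p) used in the text."""
    return p / (3.0 - 3.0 * p)


def pauli_prob(weight: int, n: int, p: float) -> float:
    """Probability of one specific n-qubit Pauli of the given weight,
    assuming i.i.d. depolarizing noise (X, Y, Z each with probability p/3)."""
    return (p / 3.0) ** weight * (1.0 - p) ** (n - weight)


def eval_wep(coeffs, z: float) -> float:
    """Evaluate a weight enumerator sum_w coeffs[w] * z**w."""
    return sum(a * z**w for w, a in enumerate(coeffs))


n, p = 7, 0.01  # illustrative values
z = z_of_p(p)

# Each weight-w Pauli has probability (1 - p)^n * z^w.
for w in range(n + 1):
    assert isclose(pauli_prob(w, n, p), (1.0 - p) ** n * z**w)

# Enumerator of the full phase-stripped Pauli group:
# there are C(n, w) * 3^w Paulis of weight w.
full_group = [comb(n, w) * 3**w for w in range(n + 1)]
total_probability = (1.0 - p) ** n * eval_wep(full_group, z)
print(total_probability)  # should be 1 up to floating-point rounding
```

Replacing `full_group` with the enumerator coefficients of a sector or error class gives that class's total probability under the same assumption.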

Necessary and Sufficient Criterion for Hybrid Advantage

For perfect recovery (ϵ=0), LDD+QEC improves on the fidelity of QEC-only if and only if the conditional fraction of uncorrectable errors is larger in the LDD-suppressed Pauli sector than in the unsuppressed sector:

$$\frac{E_S}{S} > \frac{E_U}{U}$$

Here, $E_S$ is the suppressed uncorrectable error weight, $S$ is the total suppressed error weight, and $E_U$ and $U$ are the corresponding quantities for the unsuppressed sector. The criterion holds for any code, LDD group, and recovery map.
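Because the perfect-recovery criterion is a single inequality between two ratios, it is cheap to check once the four sector weights have been evaluated. The function below is a minimal sketch; its name and the numerical inputs are hypothetical and do not come from the paper.

```python
def hybrid_beats_qec(E_S: float, S: float, E_U: float, U: float) -> bool:
    """Return True when E_S / S > E_U / U (the perfect-recovery criterion).

    The comparison is cross-multiplied to avoid dividing by small weights;
    this is valid because S and U are required to be positive.
    """
    if S <= 0 or U <= 0:
        raise ValueError("sector weights S and U must be positive")
    return E_S * U > E_U * S


# Hypothetical sector weights, chosen only to exercise both outcomes.
favourable = hybrid_beats_qec(E_S=3e-4, S=1e-2, E_U=1e-4, U=2e-2)
unfavourable = hybrid_beats_qec(E_S=1e-4, S=1e-2, E_U=3e-4, U=2e-2)
print(favourable, unfavourable)
```

Equality of the two ratios returns `False`, matching the strict inequality in the criterion.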

Sufficient Low-Noise Rule: Minimal Weight Suppression

In the limit p→0, where the logical error rate is dominated by minimal-weight faults, if LDD suppresses at least one minimal-weight uncorrectable Pauli error, then LDD+QEC outperforms QEC-only for all sufficiently small p (for fixed λ<1). This is formalized via the weights α (the minimal weight of an uncorrectable error) and β (the minimal weight of an uncorrectable error in the suppressed sector): the rule applies when β=α.
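The weights α and β can be read off directly from a list of uncorrectable errors. The sketch below assumes that an error counts as suppressed when it anticommutes with at least one LDD generator, which is one reading of "defined via commutation with the LDD group". The three-qubit error list is a hand-picked toy, not the uncorrectable set of any real code or decoder, and all function names are illustrative.

```python
def weight(pauli: str) -> int:
    """Number of non-identity tensor factors in a Pauli string."""
    return sum(ch != "I" for ch in pauli)


def anticommute(a: str, b: str) -> bool:
    """Two Pauli strings anticommute iff they carry different non-identity
    letters on an odd number of positions."""
    clashes = sum(x != "I" and y != "I" and x != y for x, y in zip(a, b))
    return clashes % 2 == 1


def is_suppressed(error: str, ldd_generators) -> bool:
    """Assumed sector rule: suppressed if some generator anticommutes."""
    return any(anticommute(error, g) for g in ldd_generators)


def alpha_beta(uncorrectable, ldd_generators):
    """Return (alpha, beta); beta is None if no uncorrectable error is suppressed."""
    alpha = min(weight(e) for e in uncorrectable)
    suppressed = [weight(e) for e in uncorrectable
                  if is_suppressed(e, ldd_generators)]
    beta = min(suppressed) if suppressed else None
    return alpha, beta


# Toy uncorrectable set (hypothetical).
toy_errors = ["XXI", "ZZI", "XXX"]

print(alpha_beta(toy_errors, ["ZZZ"]))  # (2, 3): beta > alpha, rule does not apply
print(alpha_beta(toy_errors, ["XII"]))  # (2, 2): beta == alpha, rule applies
```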

Robustness to Recovery Imperfection

When recovery is imperfect but ϵ>0 is small, any strict advantage present for ϵ=0 persists for a nonzero interval of ϵ due to continuity of the fidelity expressions.

Decoder and Generator Co-Design

The optimality of LDD+QEC depends not only on the code but also on the choice of LDD generators and the decoder. Stabilizer dressing of LDD generators can be exploited to ensure that critical low-weight uncorrectable errors reside in the suppressed sector, thus enforcing the sufficient condition for hybrid advantage. In particular, dressing the logical generator g by a stabilizer element S (not to be confused with the suppressed sector) such that some chosen uncorrectable error anticommutes with gS can ensure β′=α, where β′ denotes β evaluated for the dressed generator.
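Stabilizer dressing can be illustrated on the Steane code with phase-stripped Pauli strings. The sketch below assumes the common convention in which IIIXXXX is one of the X-type stabilizer generators and XXXXXXX is the transversal logical X. The weight-2 Z error is a stand-in for a low-weight uncorrectable error; whether it is actually uncorrectable depends on the decoder. Function names are illustrative.

```python
# Single-qubit Paulis as (x, z) bit pairs; phases are dropped.
TO_BITS = {"I": (0, 0), "X": (1, 0), "Y": (1, 1), "Z": (0, 1)}
FROM_BITS = {bits: label for label, bits in TO_BITS.items()}


def multiply(a: str, b: str) -> str:
    """Product of two Pauli strings up to phase (XOR of the (x, z) pairs)."""
    out = []
    for ca, cb in zip(a, b):
        (xa, za), (xb, zb) = TO_BITS[ca], TO_BITS[cb]
        out.append(FROM_BITS[(xa ^ xb, za ^ zb)])
    return "".join(out)


def anticommute(a: str, b: str) -> bool:
    """True if the symplectic inner product of a and b is odd."""
    total = 0
    for ca, cb in zip(a, b):
        (xa, za), (xb, zb) = TO_BITS[ca], TO_BITS[cb]
        total += xa * zb + za * xb
    return total % 2 == 1


logical_x = "XXXXXXX"   # generator g: transversal logical X
stabilizer = "IIIXXXX"  # stabilizer element used for dressing (assumed convention)
dressed = multiply(logical_x, stabilizer)  # gS, same logical action as g

error = "ZIIZIII"       # illustrative weight-2 Z error

print(dressed)                          # XXXIIII
print(anticommute(error, logical_x))    # False: not suppressed by g
print(anticommute(error, dressed))      # True: suppressed by gS
```

The dressed generator acts identically on the code space, yet it anticommutes with an error that the undressed generator commutes with, which is the mechanism that moves a chosen error into the suppressed sector.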

Numerical Analysis and Empirical Regimes

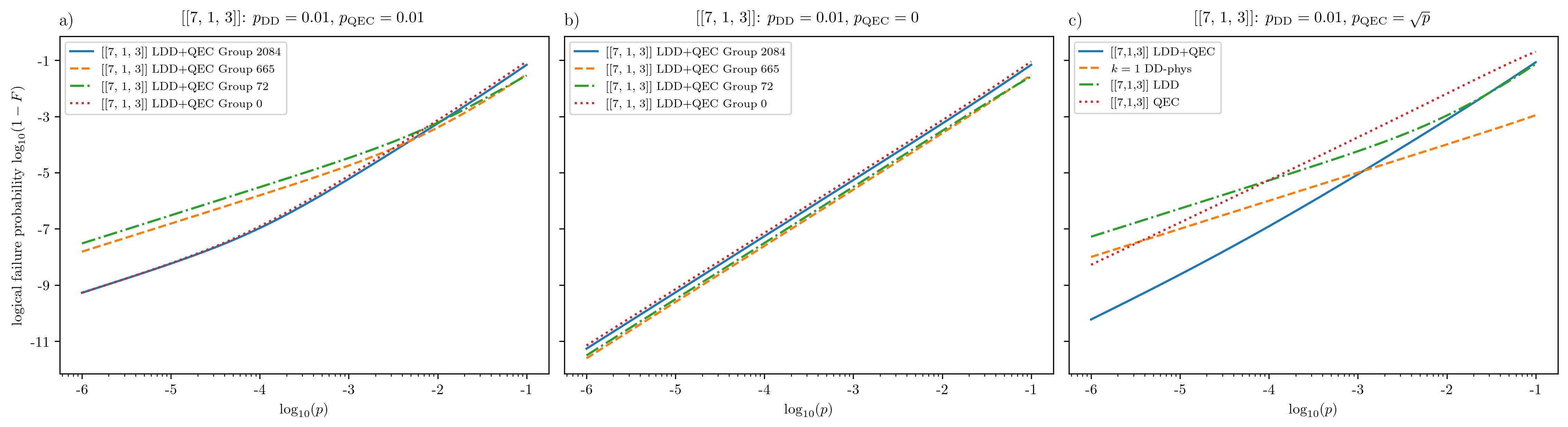

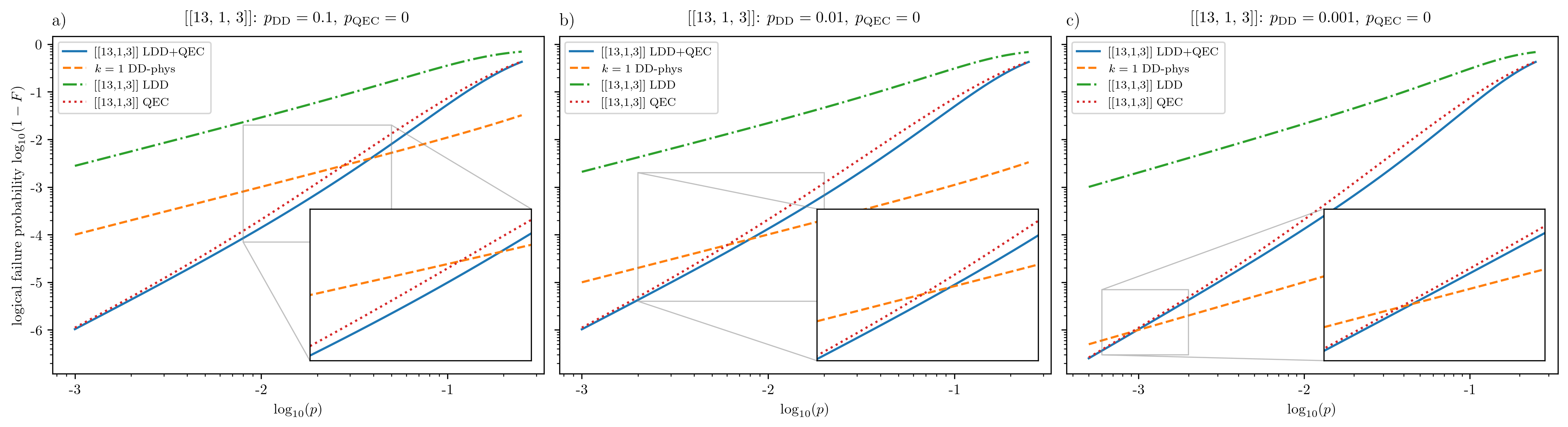

Comprehensive numerical evaluation of the analytic formulas is undertaken for the [[7,1,3]] Steane code and a [[13,1,3]] code, enabling mapping of logical failure rates and relative improvement metrics R across (λ,ϵ) (and p) parameter planes.

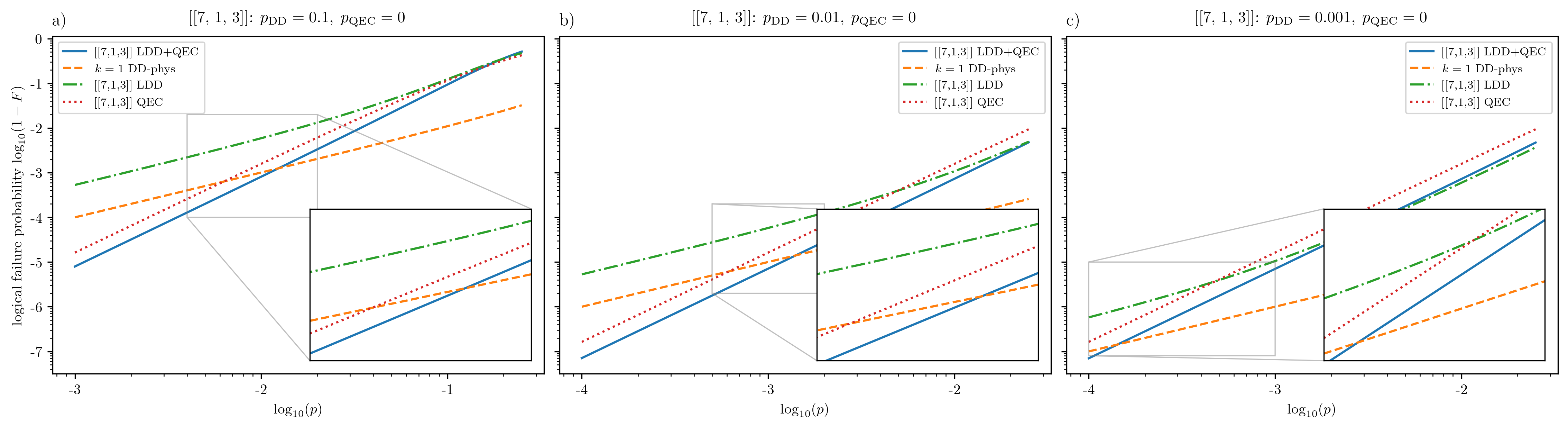

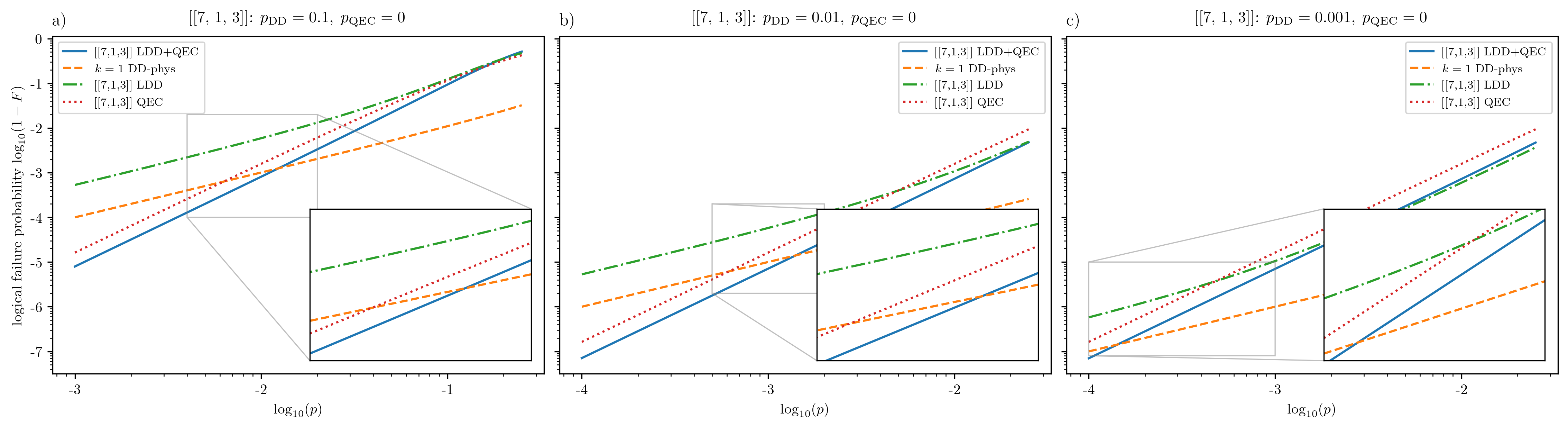

Figure 1: Logical failure probability for LDD+QEC, QEC-only, LDD-only, and physical DD for the [[7,1,3]] code; LDD+QEC shows superior low-p scaling when the sufficient condition holds.

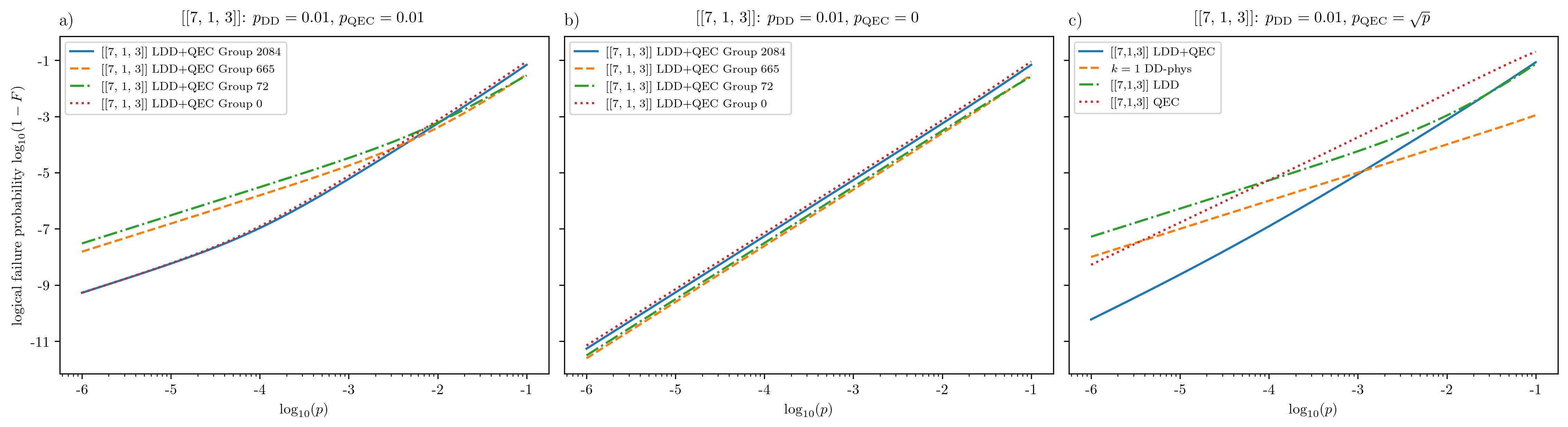

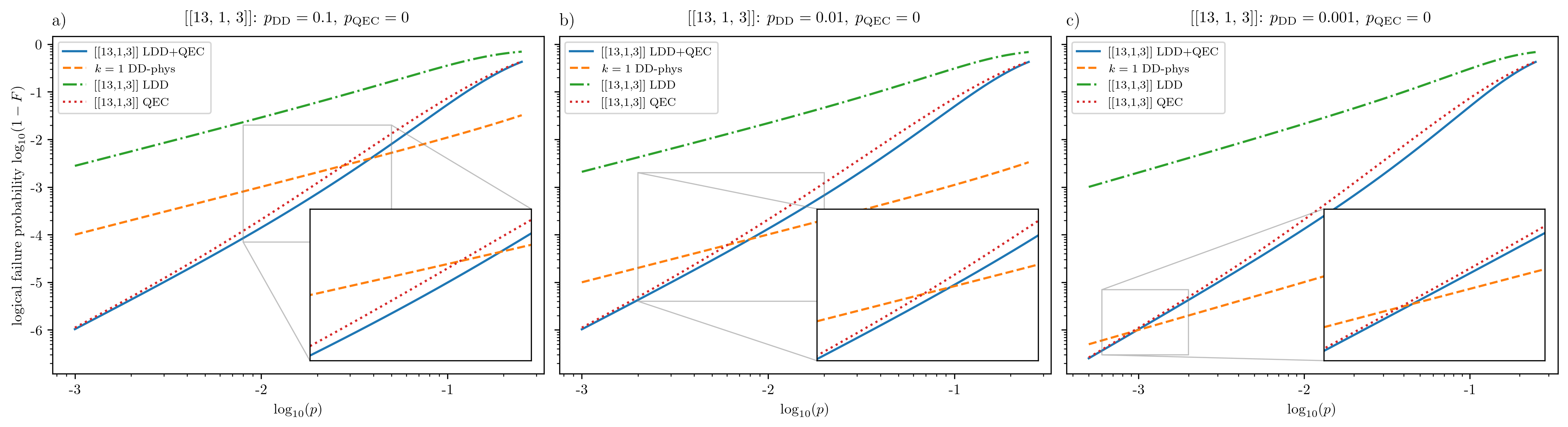

Key numerical findings include:

- In the low-p, perfect-recovery regime, when the code and LDD group are co-designed so that some minimal-weight uncorrectable errors are suppressed, LDD+QEC achieves strictly lower logical failure rates than QEC-only, matching theoretical predictions.

- When the sufficient condition is not met (i.e., the dominant uncorrectable errors commute with LDD), the asymptotic advantage disappears; LDD+QEC and QEC-only converge.

- Imperfect recovery (ϵ>0) fundamentally reshapes logical failure hierarchies: suppression of low-weight (even correctable) errors confers more benefit than targeting only rare uncorrectable events.

- In all regimes, the best LDD generator depends on both decoder structure and anticipated ϵ, reinforcing the need for co-design.

- The region in (λ,ϵ) space where hybrid protocols dominate expands as p decreases, but is not guaranteed to be universal.

Figure 2: Dependence of LDD+QEC logical failure probability on choice of LDD generator and recovery imperfection ϵ; different LDD groups are optimal at low, intermediate, and high p.

Figure 3: Relative improvement R of LDD+QEC versus QEC-only and physical DD in (λ,ϵ) space for the [[7,1,3]] code; green indicates LDD+QEC is favored.

Figure 4: Logical failure for [[13,1,3]] code with LDD not suppressing any minimal uncorrectable error; LDD+QEC and QEC-only curves coincide asymptotically.

Quantum Error Detection Generalization

The analysis extends to QED protocols (accepting outputs only on trivial syndrome). Here, the comparison is richer due to the two-dimensional performance space: conditional logical fidelity and acceptance probability. The WEP formalism yields analogous necessary and sufficient criteria, and partial orderings of protocol pairs are established using fidelity excesses.

Implications and Future Directions

This study provides strong evidence that the benefit of hybrid LDD+QEC is contingent rather than automatic: it is realized when the logical DD explicitly targets the decoder's logical vulnerabilities. No single choice is universally optimal; for a given code and hardware, the logical operators, LDD generators, and decoder must be co-designed. The analytic (WEP-based) treatment allows for rapid evaluation and optimization over this space.

Practically, the insights guide fault-tolerance protocol design for near-term and intermediate-scale quantum computation, especially where cycle-level QEC is performed with non-negligible syndrome errors and where hardware supports flexible logical control. The framework also clarifies that a systematic advantage from LDD is not guaranteed simply by concatenation or by naive overlay; misalignment between the suppressed errors and the decoder's weaknesses can render hybridization ineffective.

Moving forward, the framework is positioned to accommodate more general noise models (e.g., biased noise, coherent errors), syndrome measurement circuits with correlated faults, and the integration of control-induced errors into the effective λ parameter. Another extension is the inclusion of multi-round QEC, where interleaving syndrome extraction and LDD could yield novel synergistic (or antagonistic) effects.

Conclusion

The paper gives a unified treatment of quantum error suppression protocols and establishes necessary and sufficient conditions for hybrid LDD+QEC benefit. By deriving closed-form fidelity expressions in terms of WEPs, and confirming the theoretical criteria across multiple codes and regimes, the work moves QEC/DD integration from heuristic intuition to rigorously grounded design principles. The core implication is that co-design of code, decoder, and logical DD group is essential, and that it determines the quantitative hierarchy of logical error suppression achievable in realistic quantum devices.