- The paper introduces AutoModel, a novel agentic architecture that replaces static recommender pipelines with self-evolving agents managing features, models, and resources.

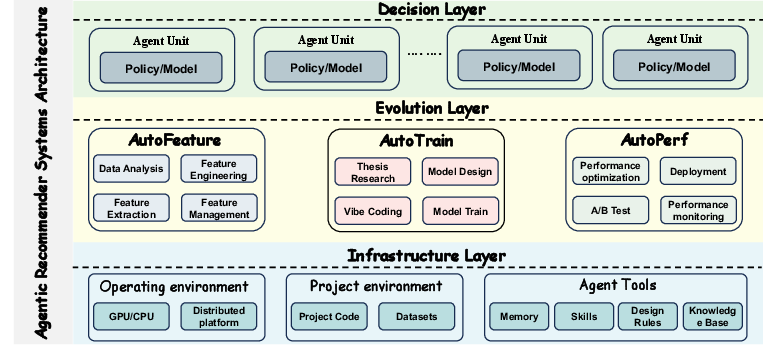

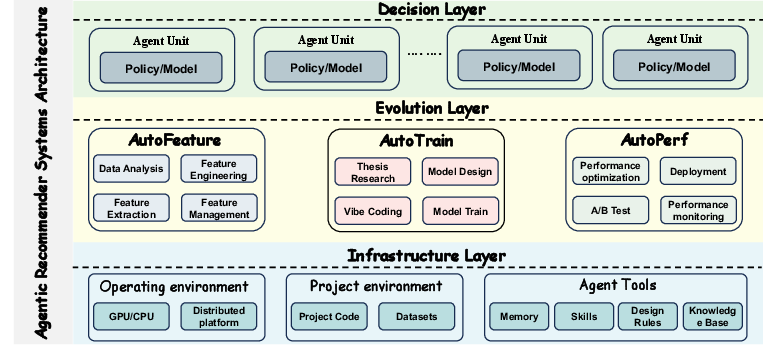

- It presents a multi-agent design—AutoFeature, AutoTrain, and AutoPerf—that integrates perception-decision loops for continuous adaptation and scalability.

- The case study demonstrates automated paper-to-model reproduction, reducing manual overhead and enabling robust, scalable transitions in dynamic environments.

AgenticRS-Architecture: Design and Implications for Agentic Recommender Systems

Motivation and Problem Statement

The paper addresses the structural limitations of current industrial recommender systems, which are predominantly managed as static, human-centric pipelines split into recall, ranking, and policy modules. This rigidity limits their ability to adapt to heterogeneous data regimes and fast-evolving objectives, a growing bottleneck for large-scale commerce and content platforms. The authors propose AutoModel, an agentic architecture that organizes recommendation as a set of evolution agents equipped with long-term memory and self-improvement mechanisms. Each agent encapsulates one major decision axis (models, features, or resources), so evolution is automated locally yet coordinated globally.

Architectural Overview: Multi-Agent Design

AutoModel replaces traditional pipelines with three persistent agents:

- AutoFeature: Handles data profiling, feature candidate generation, and selection; integrates online feedback to adapt feature sets dynamically.

- AutoTrain: Automates model architecture search, training, and offline evaluation; closes the loop from literature mining to code instantiation and iterative model refinement.

- AutoPerf: Manages training/inference resources, deployment, and A/B experimentation; enforces risk boundaries and optimizes for resource and business constraints.

A coordination layer decomposes high-level intents into cross-agent workflows, managing task graphs, failure recovery, and termination. The knowledge layer maintains unified metadata, storing configurations, experimental outcomes, and reward signals for cross-agent credit assignment and reuse.
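To make the coordination layer more concrete, the following is a minimal sketch of intent decomposition into a dependency-ordered task graph with retry-based failure recovery and termination. It is not the paper's implementation: the names `Task`, `KnowledgeStore`, `decompose`, and `run_workflow`, the fixed three-step workflow, and the retry policy are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter  # standard library, Python 3.9+


@dataclass
class Task:
    name: str
    agent: str              # "AutoFeature", "AutoTrain", or "AutoPerf"
    depends_on: tuple = ()  # names of tasks that must finish first


@dataclass
class KnowledgeStore:
    """Hypothetical stand-in for the knowledge layer: an append-only log."""
    records: list = field(default_factory=list)

    def log(self, task, status, payload=None):
        self.records.append(
            {"task": task.name, "agent": task.agent,
             "status": status, "payload": payload}
        )


def decompose(intent):
    """Assumed decomposition: every intent maps to the same three tasks."""
    return [
        Task("propose_features", "AutoFeature"),
        Task("train_variant", "AutoTrain", depends_on=("propose_features",)),
        Task("stage_rollout", "AutoPerf", depends_on=("train_variant",)),
    ]


def run_workflow(intent, handlers, store, max_retries=1):
    """Run tasks in dependency order; retry failures, stop when retries run out."""
    tasks = {t.name: t for t in decompose(intent)}
    graph = {t.name: set(t.depends_on) for t in tasks.values()}
    for name in TopologicalSorter(graph).static_order():
        task = tasks[name]
        for _ in range(max_retries + 1):
            try:
                result = handlers[task.agent](task, intent)
                store.log(task, "ok", result)
                break
            except Exception as exc:  # failure recovery: record, then retry
                store.log(task, "failed", str(exc))
        else:
            return False  # termination: this task never succeeded
    return True
```

Every attempt, including failed ones, is written to the store, which is one simple way to keep the outcome history available for later reuse.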

Figure 1: The architecture of agentic recommender systems, showing interconnected evolution and decision agents operating across lifecycle stages.

Agent-Centric Evolution: Lifecycle Management

AutoModel's agent boundaries align with core lifecycle stages, making the evolution interface of each decision space explicit. Each agent executes a perception-decision-execution-feedback loop and exposes stable interfaces into which optimization strategies, such as RL or LLM-based architectural proposals, can be plugged. By transforming lifecycle metadata (model variants, features, training runs, online A/B tests) into queryable, linked representations, the system enables independent evaluation and persistent memory of past experiments, which addresses reusability and credit assignment in large-scale deployments.
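The perception-decision-execution-feedback loop can be sketched as a small base class. This is an illustrative sketch, not the paper's interface: `EvolutionAgent`, `ToyFeatureAgent`, and the made-up `offline_gain` rewards are assumptions. The point is that `decide` is the only method a different strategy (RL, LLM proposals) would need to replace.

```python
from abc import ABC, abstractmethod


class EvolutionAgent(ABC):
    """Hypothetical base class: one full loop iteration per call to step()."""

    def __init__(self):
        # Persistent memory of (observation, action, reward) triples.
        self.memory = []

    @abstractmethod
    def perceive(self):
        """Observe the current state of this agent's decision space."""

    @abstractmethod
    def decide(self, observation):
        """Choose an action; this is the pluggable strategy."""

    @abstractmethod
    def execute(self, action):
        """Carry out the action and return a scalar reward."""

    def feedback(self, observation, action, reward):
        self.memory.append((observation, action, reward))

    def step(self):
        observation = self.perceive()
        action = self.decide(observation)
        reward = self.execute(action)
        self.feedback(observation, action, reward)
        return reward


class ToyFeatureAgent(EvolutionAgent):
    """Tries each candidate feature set once, then repeats the best one."""

    def __init__(self, candidates, offline_gain):
        super().__init__()
        self.candidates = candidates
        self.offline_gain = offline_gain  # made-up rewards, for illustration

    def perceive(self):
        tried = {action for _, action, _ in self.memory}
        return tuple(c for c in self.candidates if c not in tried)

    def decide(self, untried):
        if untried:
            return untried[0]  # explore an untried candidate
        # Exploit: reuse the action with the highest remembered reward.
        return max(self.memory, key=lambda record: record[2])[1]

    def execute(self, action):
        return self.offline_gain[action]
```

For example, with candidates `["a", "b", "c"]` and gains `{"a": 0.1, "b": 0.3, "c": 0.2}`, the first three steps try each candidate once and the fourth step selects `"b"` from memory.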

Agent Responsibilities

- AutoFeature evolves representations by diagnosing data issues and proposing feature sets aligned with business objectives and downstream requirements. Feature plans are evaluated in conjunction with model variants produced by AutoTrain.

- AutoTrain manages model variant search, leveraging both business signals and literature-derived method blueprints. Its agentic loop includes method parsing, code adaptation, standardized training, and offline benchmarking, narrowing search spaces through accumulated performance and configuration records.

- AutoPerf decides deployment configurations, allocates compute and memory, selects experiment policies, and tracks risk metrics. It functions as the gatekeeper for online rollout, feeding deployment and risk signals back for further agentic optimization.
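AutoPerf's gatekeeper role can be illustrated with a simple rule-based check. The sketch below is an assumption-laden example, not the paper's mechanism: `RiskBounds`, `CandidateReport`, `gate_rollout`, and the three specific risk metrics (latency, memory, metric lift) are hypothetical choices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskBounds:
    """Hypothetical risk boundaries enforced before online rollout."""
    max_p99_latency_ms: float
    max_memory_gb: float
    min_metric_lift: float


@dataclass(frozen=True)
class CandidateReport:
    """Hypothetical measurements for one model variant."""
    variant: str
    p99_latency_ms: float
    memory_gb: float
    metric_lift: float


def gate_rollout(report, bounds):
    """Return a decision plus the violated bounds as a feedback signal."""
    violations = []
    if report.p99_latency_ms > bounds.max_p99_latency_ms:
        violations.append("latency")
    if report.memory_gb > bounds.max_memory_gb:
        violations.append("memory")
    if report.metric_lift < bounds.min_metric_lift:
        violations.append("metric_lift")
    return {
        "variant": report.variant,
        "decision": "reject" if violations else "promote",
        "violations": violations,
    }
```

Returning the list of violated bounds, rather than a bare yes/no, is what lets the result serve as a deployment and risk signal for the other agents.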

AutoTrain Case Study: Automated Paper-to-Model Reproduction

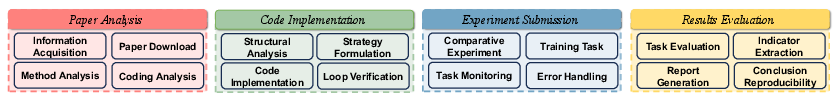

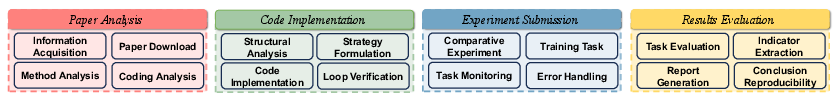

The paper details an instantiation—paper_auto_train—illustrating how AutoTrain automates the costly process of reproducing research methods and integrating them into production recommenders. The pipeline decomposes reproduction into four phases:

- Paper Parsing and Method Abstraction: Utilizes an LLM sub-agent to convert textual paper inputs (title, identifier, HTML/PDF URL) into structured method descriptions, including architecture, input/output, loss function, and expected results. This blueprint is mapped onto internal baseline models.

- Code Analysis and Implementation: Employs a code analysis and rewriting sub-agent to pinpoint insertion points in the codebase, translate pseudo-code into valid API calls, and generate both baseline and paper-driven model variants.

- Training Submission and Monitoring: Orchestrates distributed training jobs, continuously monitors loss curves and training stability, and applies automated remediation (for example, learning-rate or batch-size adjustments) when anomalies are detected.

- Result Comparison and Reporting: Applies a unified evaluation suite (AUC, NDCG, recall, user/content slices), producing human-readable summaries and structured recommendations. Negative or non-transferable cases are tracked as reusable assets to prevent redundant future attempts.

Figure 2: The pipeline of paper_auto_train, automating method parsing, code adaptation, training, and evaluation from research literature.
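Three pieces of this pipeline lend themselves to a short sketch: the structured method description, the training remediation rule, and the result comparison. The code below is a minimal illustration under stated assumptions. `MethodBlueprint`, `TrainConfig`, `remediate`, and `compare` are hypothetical names, and the spike threshold, the halve-the-learning-rate rule, and the `min_delta` adoption criterion are invented for the example rather than taken from the paper.

```python
import math
from dataclasses import dataclass, field


@dataclass
class MethodBlueprint:
    """Structured method description; fields mirror those listed above."""
    title: str
    architecture: str
    inputs: list
    outputs: list
    loss: str
    expected_results: dict = field(default_factory=dict)


@dataclass(frozen=True)
class TrainConfig:
    learning_rate: float
    batch_size: int


def remediate(losses, config, spike_ratio=2.0):
    """Halve the learning rate if the latest loss is non-finite or spikes.

    A spike (assumed rule) is a loss above spike_ratio times the best
    finite loss seen so far. Otherwise the config is returned unchanged.
    """
    if not losses:
        return config
    latest = losses[-1]
    history = [x for x in losses[:-1] if math.isfinite(x)]
    diverged = not math.isfinite(latest)
    spiked = bool(history) and latest > spike_ratio * min(history)
    if diverged or spiked:
        return TrainConfig(config.learning_rate / 2, config.batch_size)
    return config


def compare(blueprint, baseline, variant, min_delta=0.001):
    """Compare shared metrics and emit a structured recommendation."""
    deltas = {k: round(variant[k] - baseline[k], 6)
              for k in baseline if k in variant}
    improved = bool(deltas) and all(d >= min_delta for d in deltas.values())
    return {
        "method": blueprint.title,
        "deltas": deltas,
        # Negative cases are kept as records rather than discarded.
        "recommendation": "adopt" if improved else "archive_as_negative",
    }
```

Archiving a non-improving variant with its deltas, instead of dropping it, reflects the idea that negative results are reusable assets.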

The case study demonstrates successful integration and benchmarking of the NeurIPS 2025 Best Paper "Gated Attention for Large Language Models: Non-linearity, Sparsity, and Attention-Sink-Free", including automatic translation of its head-specific, query-dependent sigmoid gating into the production attention module. The agent tracks every step and result, producing reproducible records and transparent execution traces.
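For readers unfamiliar with the gating mechanism, here is a dependency-free sketch of head-specific, query-dependent sigmoid gating applied to the output of scaled dot-product attention. It reflects one reading of the technique and is neither the cited paper's code nor the production module: the function names, the placement of the gate directly on each head's attention output, and the weight layout are assumptions.

```python
import math


def matvec(x, w):
    """Row vector x (length n) times matrix w (n x m)."""
    return [sum(xi * w[i][j] for i, xi in enumerate(x))
            for j in range(len(w[0]))]


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(z):
    # Numerically stable for large negative or positive z.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def gated_head(xs, wq, wk, wv, wg, causal=True):
    """One attention head whose output is scaled by a sigmoid gate.

    xs is a list of token vectors. The gate for token i is computed from
    xs[i] alone (query-dependent) with this head's own wg (head-specific).
    """
    qs = [matvec(x, wq) for x in xs]
    ks = [matvec(x, wk) for x in xs]
    vs = [matvec(x, wv) for x in xs]
    scale = 1.0 / math.sqrt(len(qs[0]))
    outputs = []
    for i, (x, q) in enumerate(zip(xs, qs)):
        last = i + 1 if causal else len(xs)
        weights = softmax(
            [scale * sum(a * b for a, b in zip(q, ks[j])) for j in range(last)]
        )
        attended = [sum(w * vs[j][d] for j, w in enumerate(weights))
                    for d in range(len(vs[0]))]
        gate = [sigmoid(g) for g in matvec(x, wg)]
        outputs.append([g * a for g, a in zip(gate, attended)])
    return outputs


def gated_multi_head(xs, heads):
    """heads is a list of (wq, wk, wv, wg); per-head outputs are concatenated."""
    per_head = [gated_head(xs, *h) for h in heads]
    return [sum((out[i] for out in per_head), []) for i in range(len(xs))]
```

With a zero gate matrix every gate value is 0.5, so the head returns half of its ungated output. Strongly negative gate pre-activations push individual output dimensions toward zero, which is the source of the sparsity the paper title refers to.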

Implications and Future Directions

This agentic system architecture provides a framework for end-to-end lifecycle automation and co-evolution of large-scale industrial recommenders. By structuring independent, explicitly evaluable agents with unified memory and coordination, AutoModel enables scalable experimentation, rapid transfer of academic advances, and persistent accumulation of operational knowledge. The architecture’s reward layering (inner and outer) facilitates credit assignment and efficient convergence across evolving business constraints and modeling paradigms.

The principles and interfaces defined here are directly transferable to related domains such as search, advertising, and broader AI system orchestration. Potential future developments include deeper integration of RL-driven policy adjustments, agentic meta-learning across multi-domain business objectives, tighter coupling with foundation model architectures, and cross-system knowledge sharing via federated agent networks.

Conclusion

AgenticRS-Architecture formalizes the design of agentic recommender systems, moving away from static pipelines toward persistent, self-evolving agent graphs that coordinate model, feature, and resource evolution. The delineation of AutoFeature, AutoTrain, and AutoPerf, united by shared coordination and knowledge layers, enables both automation and alignment across lifecycle stages. The concrete realization via paper_auto_train illustrates a substantial reduction in manual overhead and more robust model transfer. The architectural constructs and agent interfaces outlined offer a scalable path for next-generation recommenders, with broad applicability across composite AI systems (2603.26085).