- The paper presents a novel dataset and classification framework for beehive sound recognition, using both SVM and CNN techniques.

- The study leverages MFCCs, Mel spectra, and normalization strategies to optimize feature extraction and classifier performance.

- Experimental findings reveal CNN advantages in context utilization while highlighting challenges in generalizing across diverse beehive environments.

Machine Learning Approaches for Beehive Sound Recognition

Introduction

The paper "To bee or not to bee: Investigating machine learning approaches for beehive sound recognition" (1811.06016) applies machine learning to the automatic recognition of beehive sounds, with the aim of supporting beekeeping through continuous monitoring of hive conditions. The task is to separate sounds produced by bees from extraneous noises captured inside the hive, using support vector machines (SVM) and convolutional neural networks (CNN) as the two classifiers. A significant contribution is a new labeled dataset, which adds to the resources available for building robust sound recognition systems in computational bioacoustic scene analysis.

Dataset and Annotation Procedure

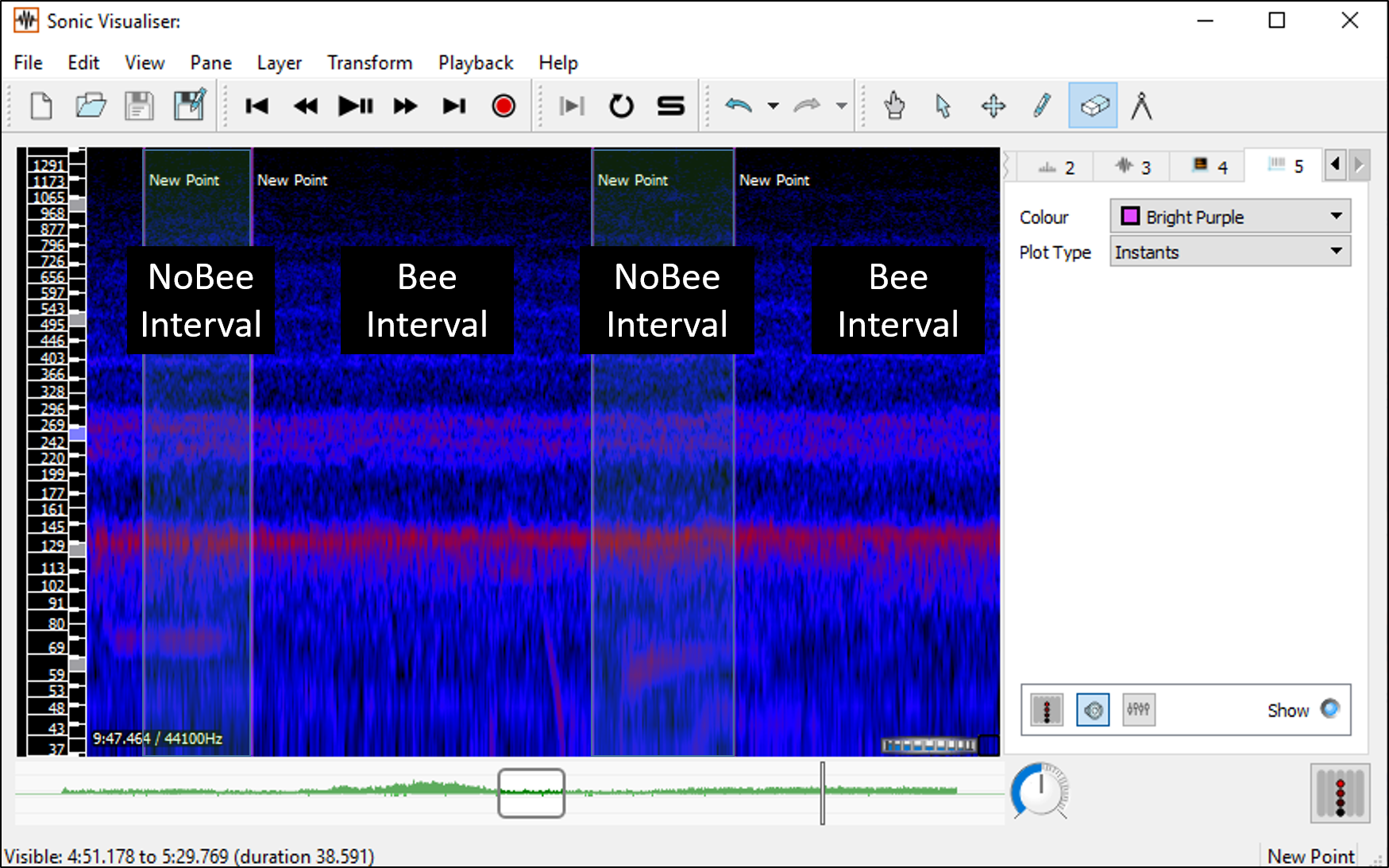

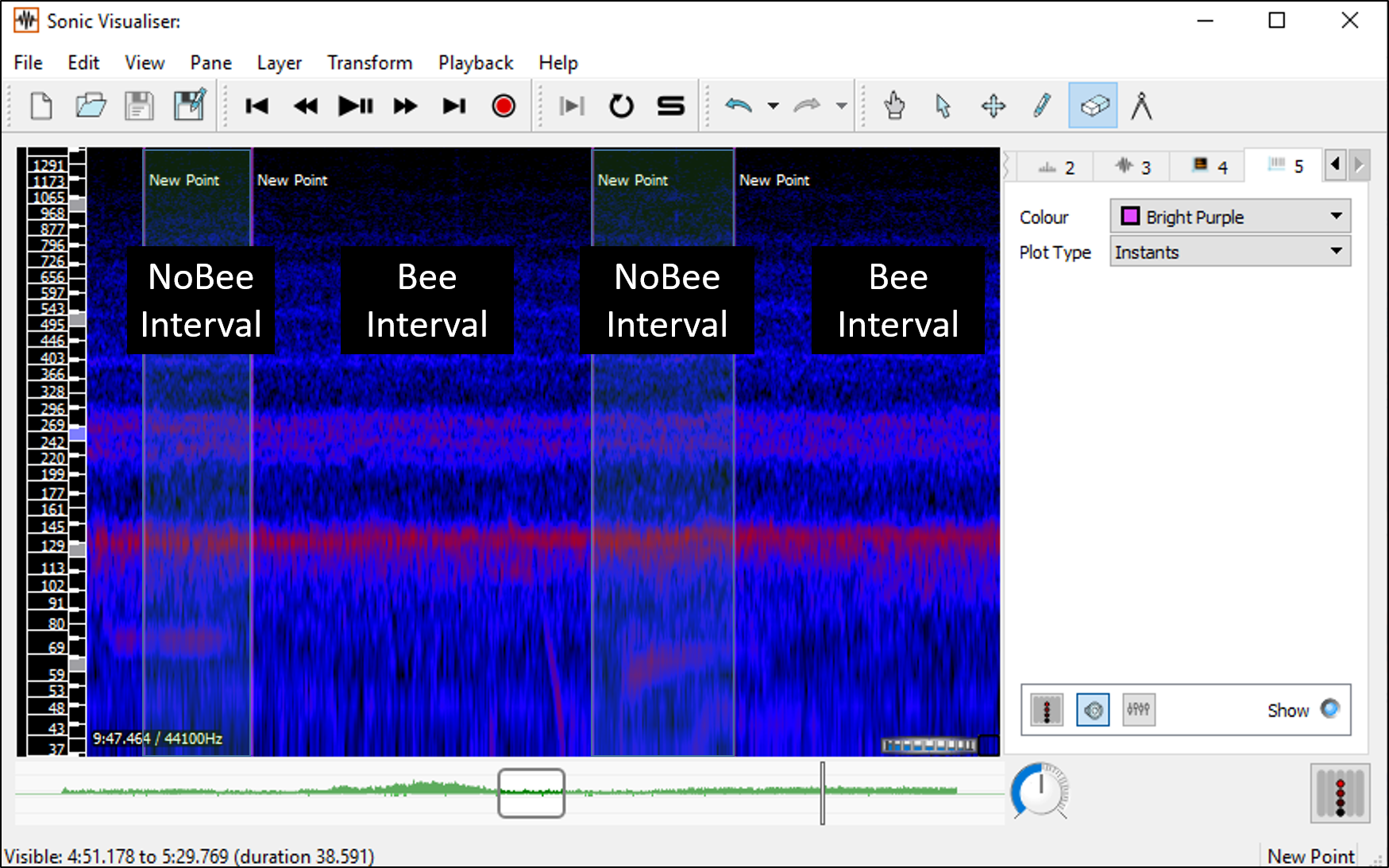

The dataset was built by annotating audio recordings from the Open Source Beehive (OSBH) project and the NU-Hive project. The recordings vary in environmental conditions, geographic region, and recording equipment, which tests how well the classifiers generalize under varied field conditions. Annotation was carried out by non-specialists, who used auditory cues and a log-mel-frequency spectrum visualization to identify and label segments containing external sounds (Figure 1).

Figure 1: Example of the annotation procedure for one audio file.

The resulting dataset consists of 78 annotated recordings totaling approximately 12 hours, of which 25% is labeled as external (non-beehive) sound. The dataset is publicly available, together with auxiliary tools that make it easier to run experiments.

Methodology

Preprocessing

The audio was processed at a sample rate of 22,050 Hz and divided into segments of a predefined length, so that every example has the same size. Each segment was labeled according to how much external sound it contains, and several segment sizes and thresholds were tried to evaluate classifier sensitivity and class balance.
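The labeling rule described above can be sketched as follows. This is a minimal illustration, not the paper's code: the function names, the interpretation of the threshold as a duration in seconds, and the choice to drop a trailing partial segment are all assumptions made for the example.

```python
def overlap(a_start, a_end, b_start, b_end):
    """Length in seconds of the overlap between two time intervals."""
    return max(0.0, min(a_end, b_end) - max(a_start, b_start))


def label_segments(duration, external_events, segment_len, threshold):
    """Split [0, duration) into fixed-length segments and label each one.

    external_events: list of (start, end) times in seconds, assumed not to
    overlap each other. A segment gets label 1 (external sound) when the
    total annotated external duration inside it exceeds `threshold` seconds
    (hypothetical rule), otherwise label 0 (beehive sound).
    """
    segments = []
    n_segments = int(duration // segment_len)  # drop the trailing partial segment
    for i in range(n_segments):
        seg_start = i * segment_len
        seg_end = seg_start + segment_len
        external = sum(overlap(seg_start, seg_end, s, e) for s, e in external_events)
        label = 1 if external > threshold else 0
        segments.append((seg_start, seg_end, label))
    return segments


# Toy annotation: two external events in a 12-second recording.
events = [(2.0, 3.5), (9.0, 9.2)]
segments = label_segments(duration=12.0, external_events=events,
                          segment_len=5.0, threshold=0.5)
labels = [label for _, _, label in segments]
```

With these toy values the first segment contains 1.5 s of external sound and is labeled 1, while the second contains only about 0.2 s and stays 0. Raising the threshold moves borderline segments to the beehive class, which is one way the segment size and threshold affect class balance.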

SVM Classifier

The SVM classifier was evaluated under multiple configurations that varied the kernel, feature type, normalization strategy, segment size, threshold, and splitting method. MFCCs and Mel spectra served as the input features, while normalization ranged from none at all to dataset-level z-score scaling. The best-performing combination was determined empirically by comparing results across these setups.
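As an illustration of the normalization step, the sketch below shows one plausible reading of dataset-level z-score normalization: per-feature statistics are estimated on the training set and then reused unchanged on test data. The helper names `fit_zscore` and `apply_zscore` are hypothetical, and the two-dimensional toy vectors stand in for real MFCC or Mel features.

```python
from statistics import fmean, pstdev


def fit_zscore(rows):
    """Compute per-feature mean and standard deviation over feature vectors."""
    columns = list(zip(*rows))
    means = [fmean(col) for col in columns]
    # Constant features have zero deviation; fall back to 1.0 to avoid dividing by zero.
    stds = [pstdev(col) or 1.0 for col in columns]
    return means, stds


def apply_zscore(rows, means, stds):
    """Scale every feature vector with previously fitted statistics."""
    return [[(x - m) / s for x, m, s in zip(row, means, stds)] for row in rows]


# Toy feature vectors (assumption: real inputs would be MFCC or Mel features).
train = [[1.0, 10.0], [3.0, 10.0], [5.0, 10.0]]
test = [[3.0, 12.0]]

means, stds = fit_zscore(train)          # statistics come from training data only
train_z = apply_zscore(train, means, stds)
test_z = apply_zscore(test, means, stds)  # the same statistics are reused here
```

Fitting the statistics on the training set alone keeps information about the test recordings out of the features, which matters most when the test data comes from different hives.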

CNN Classifier

The CNN approach adapted the Bulbul implementation, which has four convolutional layers followed by dense layers and was designed for a closely related detection problem, the Bird Audio Detection task. The network was trained on Mel spectra, with data augmentation.

Experimental Results

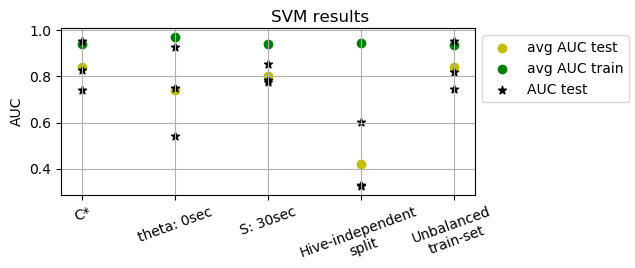

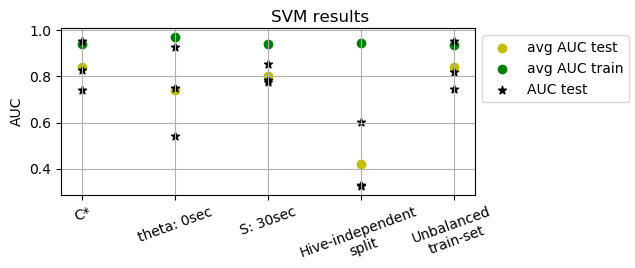

SVM Results

The SVM detected beehive sounds well in some configurations, but its performance varied with the data splitting strategy and with class imbalance. Hive-independent splits, in which the test recordings come from hives not seen during training, exposed weak generalization to new hives, which the SVM did not overcome consistently.
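A hive-independent split can be sketched as a group-wise split in which every hive is assigned entirely to either the training or the test set. The function name, the `(recording_id, hive_id)` input format, and the default test fraction are assumptions for illustration, not the paper's procedure.

```python
import random
from collections import defaultdict


def hive_independent_split(recordings, test_fraction=0.3, seed=0):
    """Split recordings so that each hive lands entirely in train or in test.

    recordings: list of (recording_id, hive_id) pairs.
    Returns (train_ids, test_ids, test_hives).
    """
    by_hive = defaultdict(list)
    for rec_id, hive_id in recordings:
        by_hive[hive_id].append(rec_id)

    hives = sorted(by_hive)                    # fixed order before shuffling
    random.Random(seed).shuffle(hives)         # seeded, so the split is reproducible
    n_test = max(1, round(len(hives) * test_fraction))
    test_hives = set(hives[:n_test])

    train = [r for h in hives if h not in test_hives for r in by_hive[h]]
    test = [r for h in hives if h in test_hives for r in by_hive[h]]
    return train, test, test_hives


# Toy metadata with three hypothetical hives.
recordings = [("rec1", "hiveA"), ("rec2", "hiveA"), ("rec3", "hiveB"),
              ("rec4", "hiveC"), ("rec5", "hiveC")]
train, test, test_hives = hive_independent_split(recordings)
```

A random split at the segment level would instead place segments from the same hive on both sides, which tends to give optimistic scores that do not reflect performance on a new hive.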

Figure 2: SVM results on the test set for each of the 3 runs (star), using the AUC score.
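For readers unfamiliar with the metric, the AUC score is the probability that a randomly chosen positive example receives a higher classifier score than a randomly chosen negative one. The sketch below computes it directly from that definition; the function name `roc_auc` and the toy labels and scores are illustrative, and a library implementation would normally be used in practice.

```python
def roc_auc(labels, scores):
    """Area under the ROC curve via pairwise comparison.

    Counts the fraction of (positive, negative) pairs in which the positive
    example has the higher score; tied scores count as half a win.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative example")

    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Toy example: one of the four positive/negative pairs is ranked the wrong way.
labels = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
auc = roc_auc(labels, scores)  # 3 of 4 pairs are ordered correctly -> 0.75
```

Because AUC depends only on the ranking of scores, it is less sensitive than plain accuracy to the class imbalance present in the dataset.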

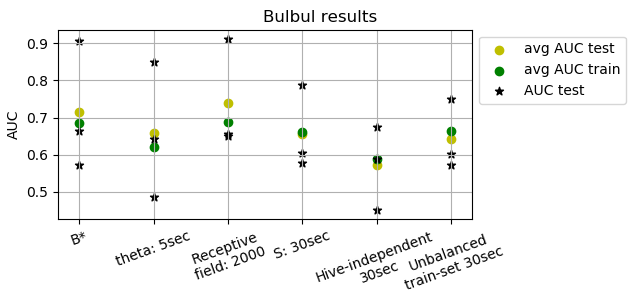

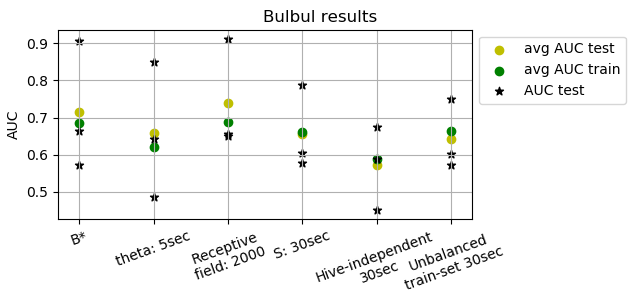

CNN Results

CNN experiments showed that the receptive field and segment size need adjusting, and that doing so lets the network make better use of context for sound classification. However, the CNN still relied on dataset-specific features and had difficulty generalizing to unseen hives.
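To see why receptive field and segment size interact, it helps to compute how many input frames a single output unit covers after a stack of convolution and pooling layers. The sketch below uses the standard recurrence for receptive fields along one axis. The layer stack and the hop length are hypothetical values chosen for illustration; they are not taken from the paper or from the Bulbul implementation.

```python
def receptive_field(layers):
    """Receptive field of a stack of conv/pool layers along one axis.

    layers: list of (kernel_size, stride) tuples, ordered from input to output.
    Returns (receptive_field, jump), both measured in input frames, where
    jump is the distance between neighbouring output units.
    """
    rf, jump = 1, 1
    for kernel, stride in layers:
        rf += (kernel - 1) * jump  # each layer widens the field by (k - 1) jumps
        jump *= stride             # strides compound through the stack
    return rf, jump


# Hypothetical stack: four blocks of a 3-wide convolution (stride 1)
# followed by a 3-wide pooling layer (stride 3).
stack = [(3, 1), (3, 3)] * 4
rf_frames, jump = receptive_field(stack)

# Convert frames to seconds, assuming a hop of 512 samples at 22,050 Hz.
hop_length = 512        # assumed spectrogram hop, not from the paper
sample_rate = 22050
rf_seconds = rf_frames * hop_length / sample_rate
```

Under these assumed settings one output unit spans 161 spectrogram frames, or roughly 3.7 seconds of audio. A segment much shorter than the receptive field leaves part of the network looking at padding, while a much longer one requires the later layers to aggregate many separate windows.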

Figure 3: Results for the Bulbul CNN using the AUC score, for each of the 3 runs (star).

Conclusion

The study is a first step towards automated beehive sound recognition systems, clarifying data requirements, classifier configurations, and generalization challenges. Neural networks showed potential, but using them in practice across different beehive environments requires further work, especially on generalization. Future research directions include enlarging the annotated dataset and improving annotation accuracy through expert validation. These advances could help the beekeeping industry by enabling remote monitoring of hive health, and may inform strategies against the ecological threats facing bees. Overall, the paper argues for closer collaboration between machine learning and bioacoustics research as a route to better ecological monitoring technology.