- The paper demonstrates that combining MFCCs, Mel spectrograms, and HHT features enhances the classification of beehive states using SVMs and CNNs.

- The study employs a two-step process of feature extraction and classification, achieving improved AUC scores in controlled experiments.

- The CNNs generalize poorly to hives not seen during training, underscoring the need for more diverse datasets and more advanced models.

Audio-Based Identification of Beehive States

Introduction

This essay examines the paper "Audio-based identification of beehive states" by Inês Nolasco et al., which explores audio analysis as a means of monitoring the state of beehives. The paper investigates support vector machines (SVMs) and convolutional neural networks (CNNs) for automatically identifying different beehive states, focusing in particular on the presence or absence of the queen bee. Because the approach relies only on audio recorded from the hive, it aims to give beekeepers a non-invasive way to monitor hive conditions.

Methodology

The paper proposes a two-step approach involving feature extraction and classification. The feature extraction process utilizes Mel-frequency cepstral coefficients (MFCCs), Mel spectrograms, and the Hilbert-Huang transform (HHT) to derive features that discern the frequency behavior of the beehive under various states. The classification step employs SVMs and CNNs to differentiate between these states based on the extracted features.

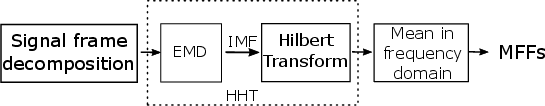

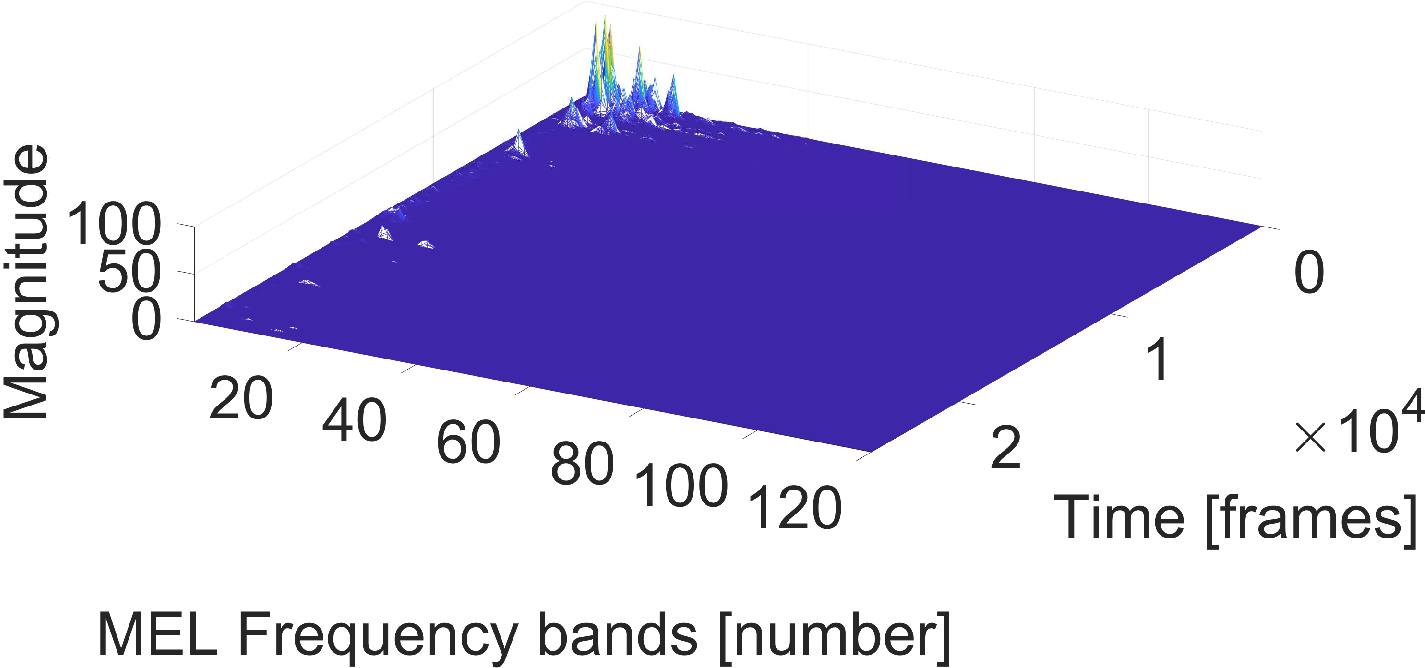

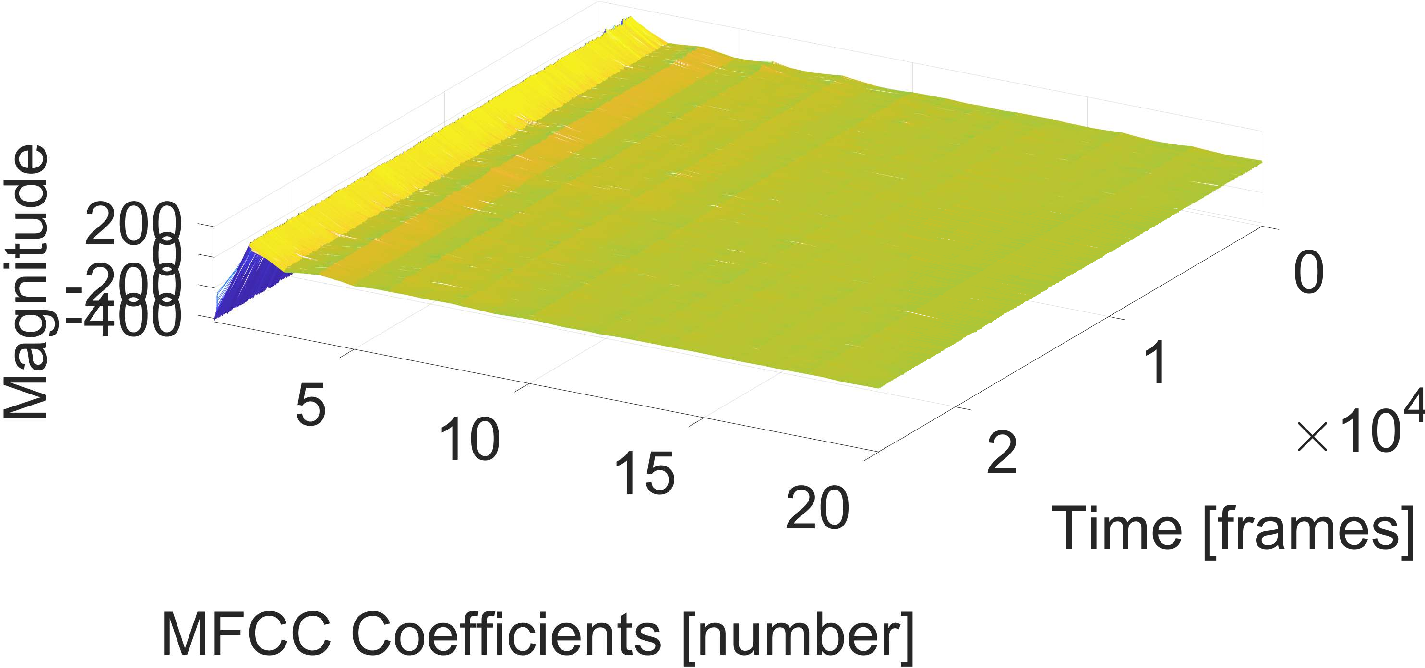

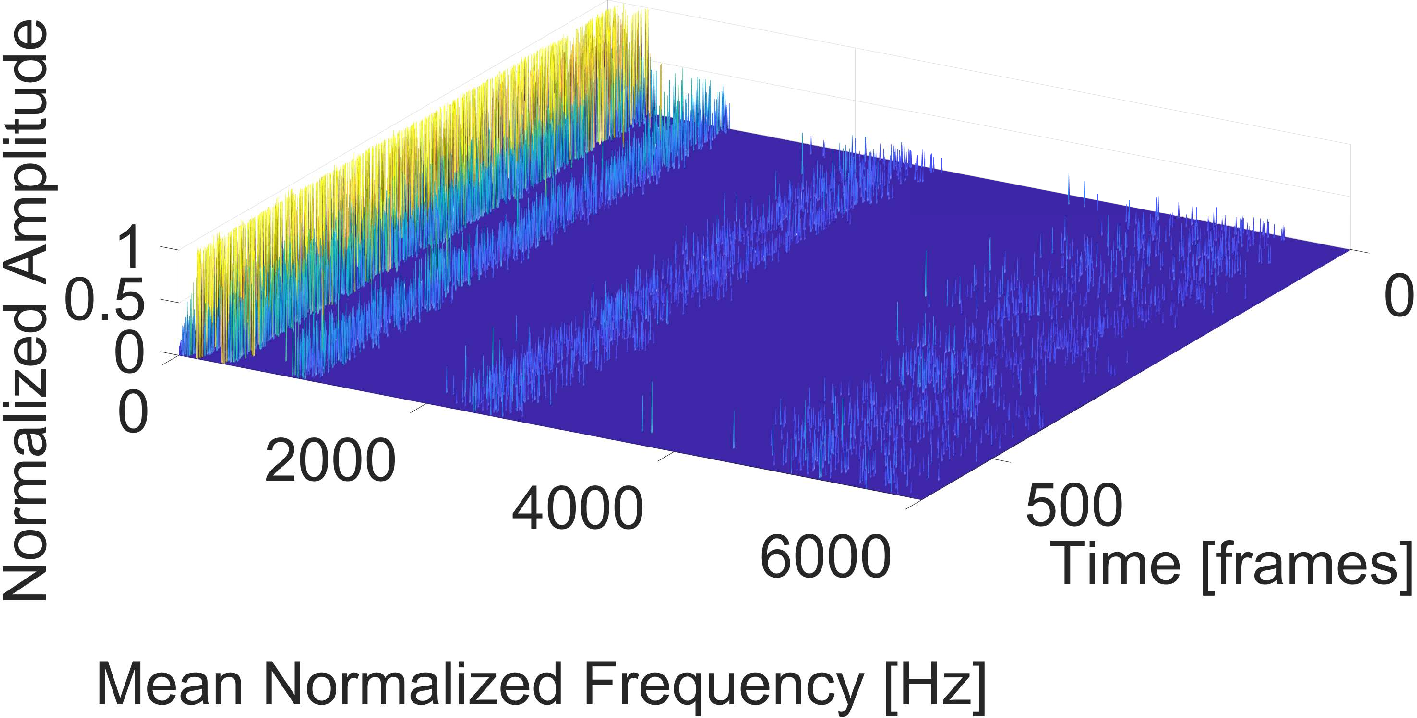

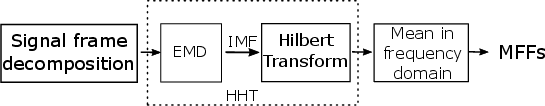

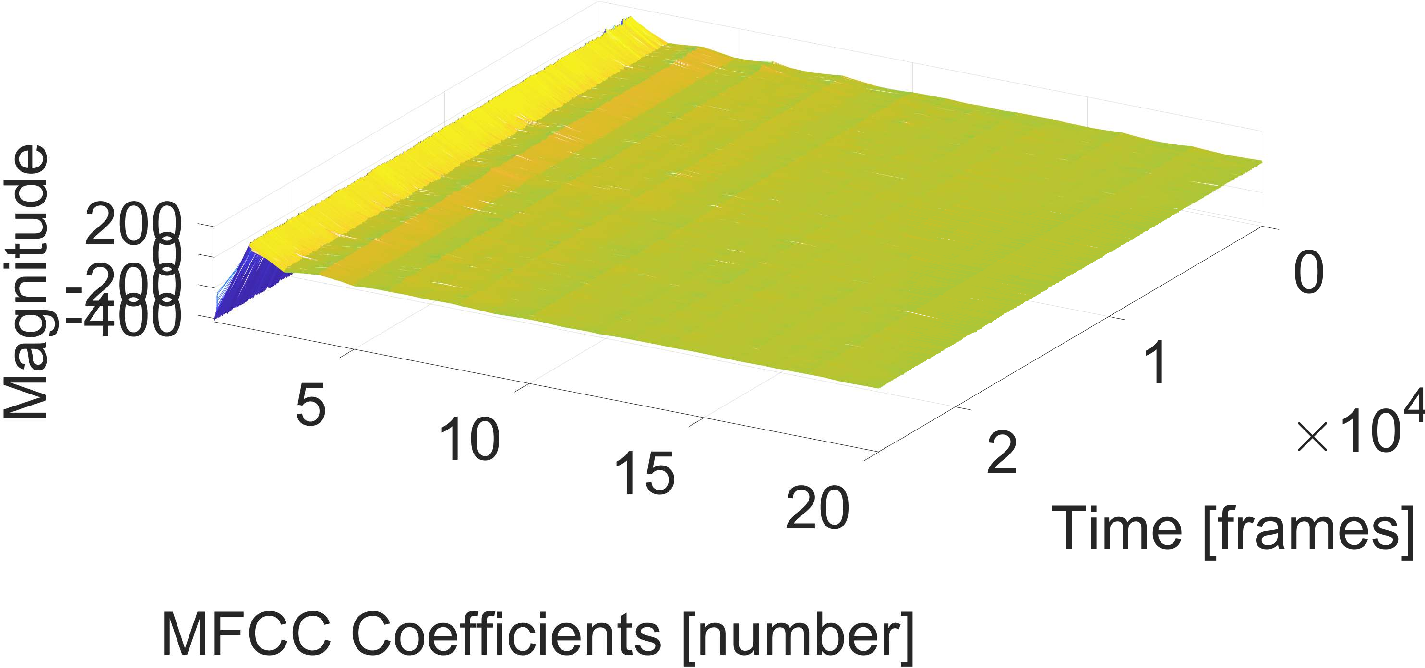

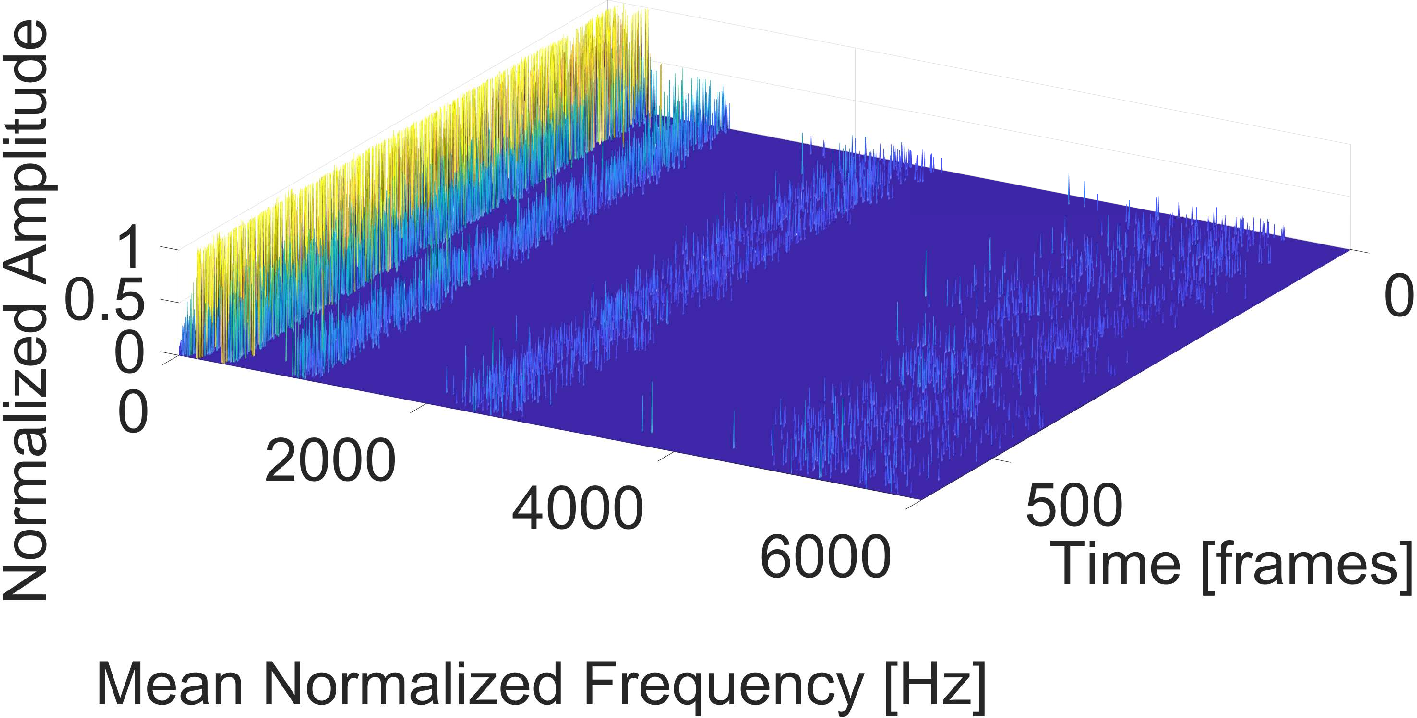

Feature extraction draws on three representations: MFCCs, Mel spectrograms, and HHT-based features. MFCCs and Mel spectrograms are standard in audio analysis. A Mel spectrogram represents the power spectrum of the sound over time on the Mel frequency scale, and MFCCs compress each Mel spectrum into a small set of cepstral coefficients. The HHT captures non-stationary signal characteristics by decomposing the signal into intrinsic mode functions (IMFs) and then deriving features from the instantaneous frequencies of those IMFs.
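To make the Mel-scale part of this concrete, the following is a minimal sketch of how frequencies are warped onto the Mel scale and how filter center frequencies end up denser at low frequencies. It uses one common (HTK-style) conversion formula; the band count and frequency range are illustrative assumptions and are not taken from the paper.

```python
import math


def hz_to_mel(f_hz: float) -> float:
    """Convert a frequency in Hz to Mel using the common HTK-style formula."""
    return 2595.0 * math.log10(1.0 + f_hz / 700.0)


def mel_to_hz(mel: float) -> float:
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def mel_center_frequencies(n_bands: int, f_min: float, f_max: float) -> list:
    """Center frequencies (Hz) of n_bands filters equally spaced on the Mel scale."""
    m_min, m_max = hz_to_mel(f_min), hz_to_mel(f_max)
    step = (m_max - m_min) / (n_bands + 1)
    return [mel_to_hz(m_min + step * (i + 1)) for i in range(n_bands)]


# Illustrative values only: 8 bands between 0 Hz and 4 kHz.
centers = mel_center_frequencies(n_bands=8, f_min=0.0, f_max=4000.0)
for i, fc in enumerate(centers):
    print(f"band {i}: {fc:7.1f} Hz")
```

The centers are equally spaced in Mel but increasingly far apart in Hz, which is why a Mel spectrogram devotes more resolution to low frequencies.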

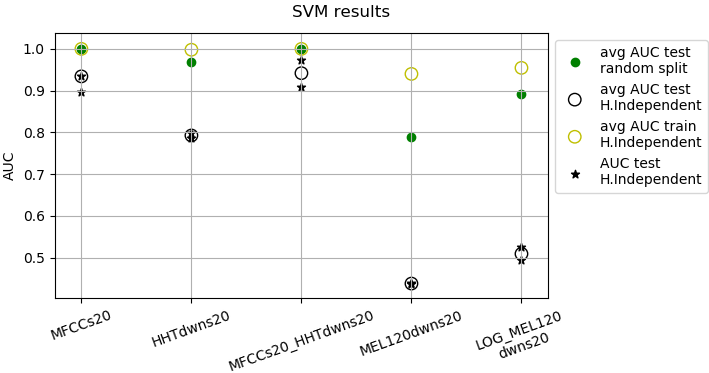

Figure 1: Feature extraction procedure based on HHT.
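As a rough illustration of the Hilbert step of the HHT, the sketch below computes the instantaneous frequency of a single oscillatory component from its analytic signal. It deliberately omits the decomposition into intrinsic mode functions: a pure tone stands in for one such component. NumPy is assumed, and the sample rate and tone frequency are made-up values, not settings from the paper.

```python
import numpy as np


def analytic_signal(x: np.ndarray) -> np.ndarray:
    """Analytic signal via the FFT: keep DC, double positive frequencies, zero negative ones."""
    n = len(x)
    spectrum = np.fft.fft(x)
    gain = np.zeros(n)
    gain[0] = 1.0
    if n % 2 == 0:
        gain[n // 2] = 1.0          # Nyquist bin is kept once
        gain[1:n // 2] = 2.0
    else:
        gain[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(spectrum * gain)


def instantaneous_frequency(x: np.ndarray, sample_rate: float) -> np.ndarray:
    """Instantaneous frequency (Hz) from the derivative of the unwrapped analytic phase."""
    phase = np.unwrap(np.angle(analytic_signal(x)))
    return np.diff(phase) * sample_rate / (2.0 * np.pi)


# Hypothetical stand-in for one intrinsic mode function: a 250 Hz tone.
sample_rate = 8000.0
t = np.arange(2048) / sample_rate
imf_like = np.cos(2.0 * np.pi * 250.0 * t)

freq = instantaneous_frequency(imf_like, sample_rate)
print(f"mean instantaneous frequency: {freq.mean():.2f} Hz")
```

For real hive audio each extracted component would vary in frequency over time, and summary statistics of these trajectories are one plausible way to turn them into features.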

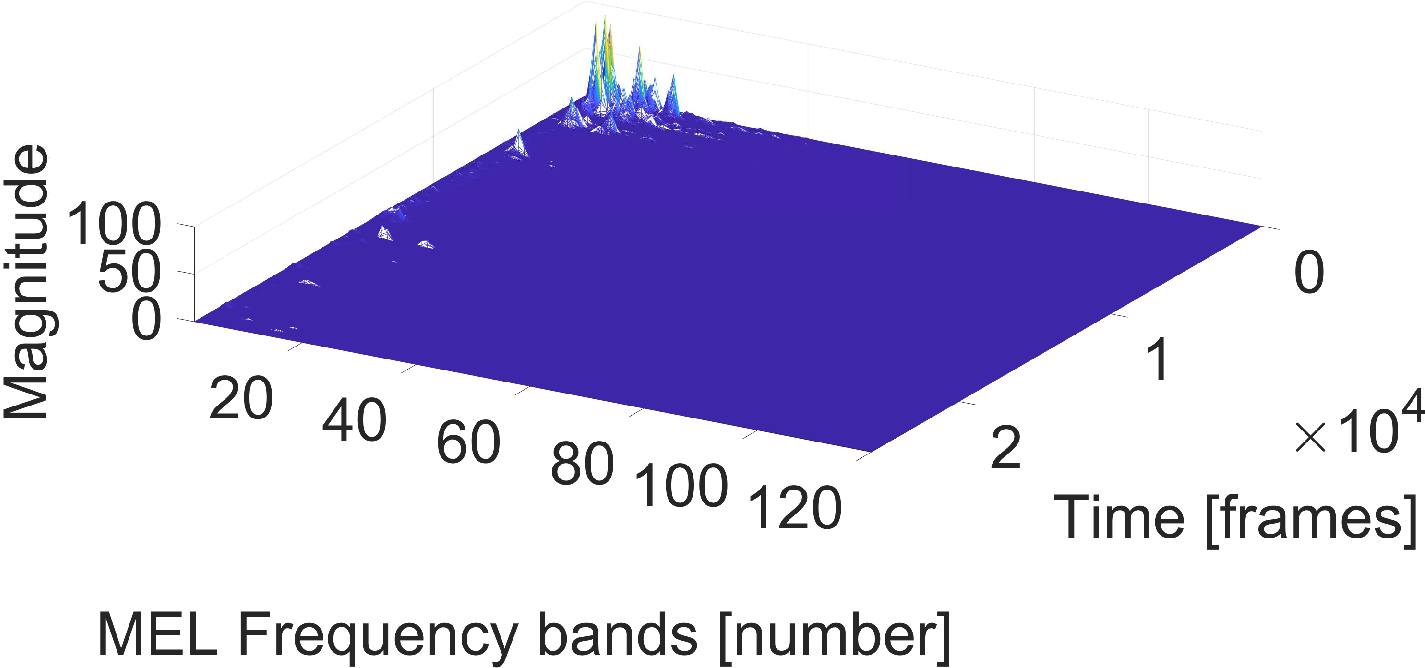

Figure 2: Comparison of feature extraction: Mel spectra, MFCCs, and HHT-based features.

Classification Techniques

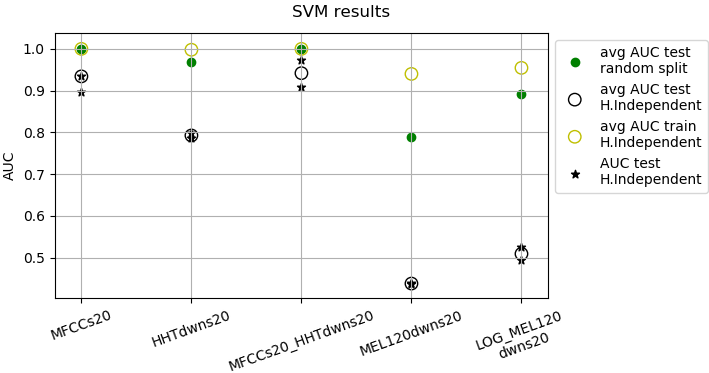

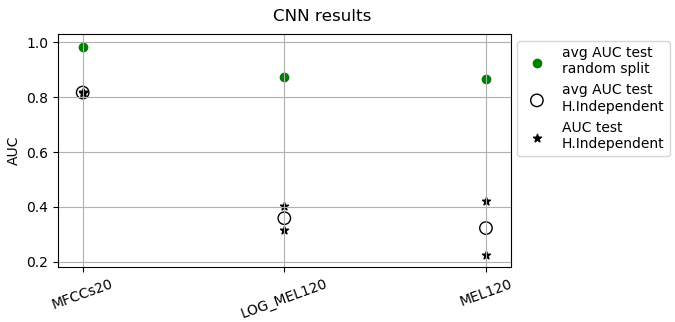

Two classification approaches are explored: SVMs with radial basis function (RBF) kernels and CNNs. The SVM-based method is trained on different combinations of MFCCs, HHT features, and Mel spectrograms, and classifies beehive states effectively, especially in controlled experimental setups. The CNNs are applied to audio spectrograms to learn discriminative time-frequency patterns directly, but with mixed success in generalizing to unseen hives.
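The two ingredients of the SVM approach described above, combining feature sets and comparing examples with an RBF kernel, can be sketched as follows. This is not the paper's implementation: the feature values, their dimensions, and the `gamma` setting are invented for illustration, and a real system would pass such a kernel (or the raw vectors) to an SVM solver.

```python
import math


def combine_features(*feature_sets):
    """Concatenate per-clip feature vectors from several extractors into one vector."""
    combined = []
    for features in feature_sets:
        combined.extend(features)
    return combined


def rbf_kernel(x, y, gamma):
    """RBF kernel k(x, y) = exp(-gamma * ||x - y||^2)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * sq_dist)


def gram_matrix(vectors, gamma):
    """Pairwise kernel values between all vectors."""
    return [[rbf_kernel(u, v, gamma) for v in vectors] for u in vectors]


# Made-up (mfcc, hht) feature pairs for three hypothetical clips.
clips = [
    combine_features([0.0, 1.0], [0.5]),
    combine_features([0.1, 0.9], [0.6]),
    combine_features([3.0, -2.0], [2.0]),
]
K = gram_matrix(clips, gamma=0.5)
for row in K:
    print(["%.4f" % value for value in row])
```

Clips with similar combined features get kernel values near 1 and dissimilar clips get values near 0, which is the notion of similarity the SVM uses to separate the classes.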

Evaluation and Results

The evaluation uses audio data from the NU-Hive project, covering two hives with distinct temporal characteristics. The paper assesses two kinds of data split: random splits, in which recordings from the same hive can appear in both the training and test sets, and hive-independent splits, in which the test hive is held out entirely, to gauge how well the models generalize. The area under the curve (AUC) score is used as the primary performance metric.
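For readers unfamiliar with the metric, here is a minimal sketch of how an AUC score can be computed, assuming AUC denotes the area under the ROC curve, as is conventional. It uses the rank interpretation: the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one. The labels and scores are invented for illustration.

```python
def roc_auc(labels, scores):
    """ROC AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a correct pair.
    """
    positives = [s for label, s in zip(labels, scores) if label == 1]
    negatives = [s for label, s in zip(labels, scores) if label == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative example")

    correct = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                correct += 1.0
            elif p == n:
                correct += 0.5
    return correct / (len(positives) * len(negatives))


# Hypothetical classifier outputs; label 1 marks the positive class.
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.1]
auc = roc_auc(labels, scores)
print(f"AUC = {auc:.3f}")
```

A score of 0.5 corresponds to chance-level ranking and 1.0 to perfect separation, which makes AUC independent of any particular decision threshold.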

Figure 3: SVM results showing AUC scores for different feature combinations.

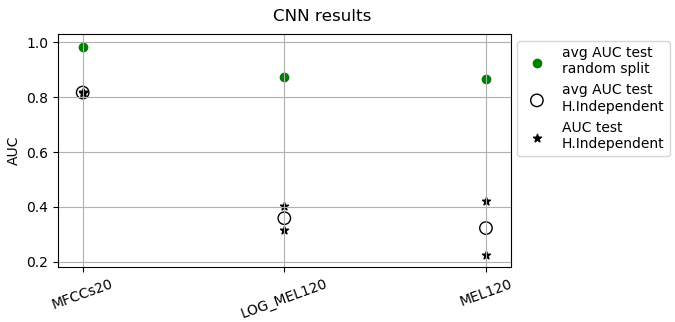

Figure 4: CNN results exhibiting varied performance on hive-independent test sets.

In the SVM experiments, combining HHT and MFCC features yields notable improvements in AUC scores, which suggests the two feature sets are complementary. The CNN models perform well in the hive-dependent setting but struggle to maintain that performance on hives not seen during training, underscoring the need for feature-engineered inputs or more diverse data.
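The gap between hive-dependent and hive-independent results comes down to how the data are split. The sketch below contrasts the two strategies on a toy clip list; the hive names, clip records, and split fraction are hypothetical and do not reflect the actual dataset layout.

```python
import random


def random_split(clips, test_fraction, seed=0):
    """Shuffle all clips together, so every hive can appear in both train and test."""
    shuffled = clips[:]
    random.Random(seed).shuffle(shuffled)
    n_test = int(round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def hive_independent_split(clips, test_hives):
    """Hold out every clip from the given hives, so test hives are never seen in training."""
    train = [c for c in clips if c["hive"] not in test_hives]
    test = [c for c in clips if c["hive"] in test_hives]
    return train, test


# Toy data: six clips from one hypothetical hive, four from another.
clips = [
    {"hive": "hive_A" if i < 6 else "hive_B", "clip_id": i, "queen_present": i % 2 == 0}
    for i in range(10)
]

train_r, test_r = random_split(clips, test_fraction=0.3)
train_h, test_h = hive_independent_split(clips, test_hives={"hive_B"})

print("random split test hives:      ", sorted({c["hive"] for c in test_r}))
print("hive-independent test hives:  ", sorted({c["hive"] for c in test_h}))
```

With a random split a model can score well by learning hive-specific acoustics, whereas the hive-independent split only rewards cues that transfer between hives.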

Implications and Future Directions

The research reveals the viability of audio-based monitoring systems in apiculture, providing a framework for future exploration into acoustic signature recognition of other hive states beyond queen presence detection. The integration of HHT features with traditional audio analysis methods shows promise in capturing complex acoustic patterns that are typical of beehive environments.

For future work, expanding the dataset with a larger variety of hives and environmental conditions could improve model robustness. Additionally, advanced deep learning architectures or hybrid models that make better use of temporal context could improve generalization to unseen hives.

Conclusion

The study underscores the potential of using machine learning for beehive monitoring, combining traditional feature extraction techniques with modern classification algorithms to achieve meaningful results. While challenges remain in generalizing beyond specific test conditions, the foundational framework laid out in this research offers a pathway towards more sophisticated, non-invasive hive monitoring solutions.