- The paper introduces a semi-supervised deep learning framework that non-invasively models beehive strength using audio and environmental sensor data.

- It employs a generative-prediction network with a convolutional variational autoencoder to efficiently extract and utilize sophisticated audio features.

- Results from 10-fold cross-validation demonstrate robust predictive accuracy in estimating colony health and detecting disease severity.

Semi-Supervised Audio Representation Learning for Modeling Beehive Strengths

Introduction

The study "Semi-Supervised Audio Representation Learning for Modeling Beehive Strengths" introduces an innovative approach employing semi-supervised deep learning to model beehive dynamics using audio data and environmental sensors. The motivation stems from the critical role honey bees play in ecosystem dynamics and food security, coupled with observed declines in bee populations due to environmental stressors. Traditional methods of colony management involve labor-intensive inspections that disrupt hives, highlighting the need for advanced, non-invasive monitoring technologies.

Data Collection and Preprocessing

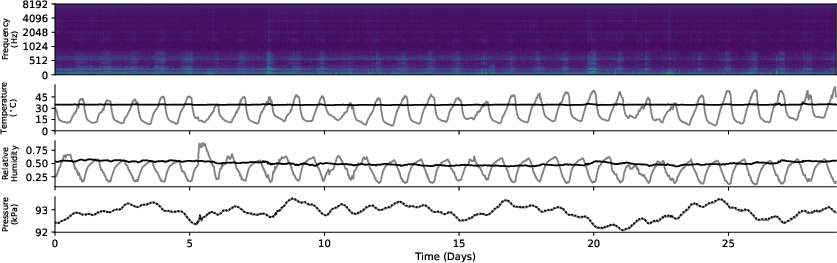

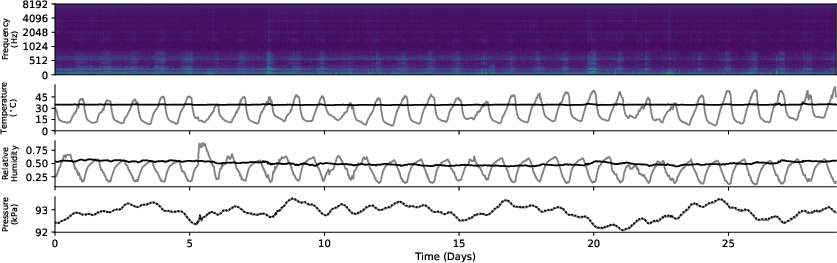

The authors collected data continuously from custom-designed sensors installed in the hives, capturing multimodal data: audio, temperature, humidity, and pressure (Figure 1). Audio was recorded every 15 minutes and converted to mel spectrograms for further analysis. To make the learned representation more specific, the spectrogram features were downscaled, and the network was pre-trained as an autoencoder to stabilize the variational latent space.

Figure 1: Collected multi-modal data from our custom hardware sensor for one hive across one month.
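
The audio preprocessing described above can be sketched as follows. This is a minimal NumPy illustration, not the authors' pipeline: the sample rate, FFT size, hop length, number of mel bands, and the average-pooling `downscale` helper are all assumptions chosen for the example, and a synthetic tone stands in for a hive recording.

```python
import numpy as np

def hz_to_mel(f):
    # Common HTK-style mel formula.
    return 2595.0 * np.log10(1.0 + np.asarray(f, dtype=float) / 700.0)

def mel_to_hz(m):
    return 700.0 * (10.0 ** (np.asarray(m, dtype=float) / 2595.0) - 1.0)

def mel_filterbank(sr, n_fft, n_mels):
    """Triangular filters evenly spaced on the mel scale, shape (n_mels, n_fft//2 + 1)."""
    fft_freqs = np.linspace(0.0, sr / 2.0, n_fft // 2 + 1)
    edges = mel_to_hz(np.linspace(hz_to_mel(0.0), hz_to_mel(sr / 2.0), n_mels + 2))
    fb = np.zeros((n_mels, fft_freqs.size))
    for i in range(n_mels):
        lo, mid, hi = edges[i], edges[i + 1], edges[i + 2]
        rising = (fft_freqs - lo) / (mid - lo)
        falling = (hi - fft_freqs) / (hi - mid)
        fb[i] = np.clip(np.minimum(rising, falling), 0.0, None)
    return fb

def log_mel_spectrogram(signal, sr=16000, n_fft=1024, hop=512, n_mels=64):
    """Return a log-power mel spectrogram in dB, shape (n_mels, n_frames)."""
    window = np.hanning(n_fft)
    n_frames = 1 + (len(signal) - n_fft) // hop
    frames = np.stack([signal[i * hop : i * hop + n_fft] * window
                       for i in range(n_frames)])
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2      # (n_frames, n_fft//2 + 1)
    mel = power @ mel_filterbank(sr, n_fft, n_mels).T      # (n_frames, n_mels)
    return 10.0 * np.log10(mel + 1e-10).T

def downscale(spec, factor=2):
    """Hypothetical downscaling step: average-pool over both axes."""
    m, t = spec.shape
    m2, t2 = m // factor, t // factor
    trimmed = spec[: m2 * factor, : t2 * factor]
    return trimmed.reshape(m2, factor, t2, factor).mean(axis=(1, 3))

# Synthetic one-second 250 Hz tone standing in for a hive recording.
sr = 16000
t = np.arange(sr) / sr
clip = np.sin(2 * np.pi * 250.0 * t)

spec = log_mel_spectrogram(clip, sr=sr)
small = downscale(spec, factor=2)
print(spec.shape, small.shape)
```

The downscaled array is what an embedding model would consume in this sketch; in practice the pooling factor and spectrogram parameters would be tuned to the recording hardware.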

Generative-Prediction Network Architecture

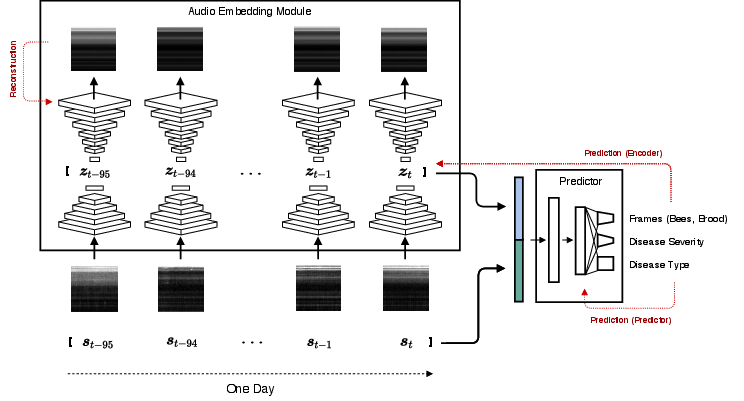

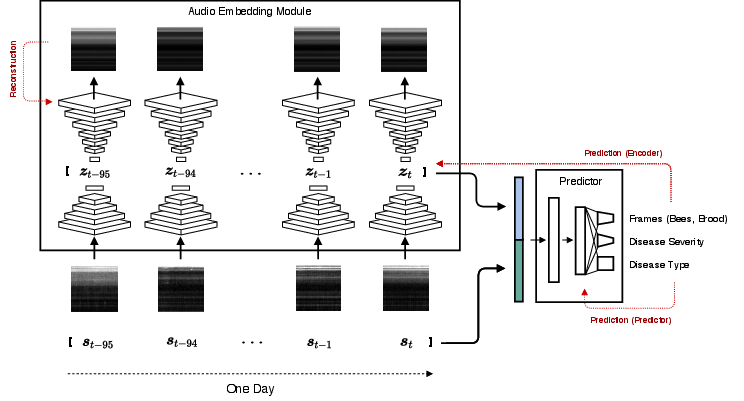

The Generative-Prediction Network (GPN) integrates hierarchical audio feature learning and predictive modeling to estimate hive strength indicators. It comprises an audio embedding module followed by a prediction network (Figure 2). The embedding module reduces dimensionality using a convolutional variational autoencoder (CVAE), providing robust feature extraction necessary for handling scarce labeled data. The predictor leverages this distilled representation alongside environmental context inputs to model the circadian dynamics and temporal patterns critical for predicting bee population metrics.

Figure 2: Hierarchical Generative-Prediction Network. s: point estimates of environmental factors (temperature, humidity, air pressure), z: latent variables.
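
To make the data flow in Figure 2 concrete, the sketch below shows one way a variational embedding and a predictor could be combined into a single semi-supervised loss. It is an illustration under stated assumptions, not the paper's implementation: random linear maps replace the convolutional encoder and decoder, the weights `beta` and `gamma` and the function `gpn_style_loss` are hypothetical, and all data are random placeholders. What it does show is the reparameterized latent `z`, its concatenation with the environmental inputs `s`, and a prediction loss that is applied only to labeled samples.

```python
import numpy as np

rng = np.random.default_rng(0)

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, I)."""
    return mu + np.exp(0.5 * log_var) * rng.standard_normal(mu.shape)

def kl_to_standard_normal(mu, log_var):
    """KL(N(mu, sigma^2) || N(0, I)) per sample, summed over latent dimensions."""
    return 0.5 * np.sum(np.exp(log_var) + mu ** 2 - 1.0 - log_var, axis=1)

def gpn_style_loss(x, x_hat, mu, log_var, y_hat, y, labeled, beta=1.0, gamma=1.0):
    """Reconstruction + KL on every sample, prediction error on labeled samples only."""
    recon = np.mean((x - x_hat) ** 2, axis=1)
    kl = kl_to_standard_normal(mu, log_var)
    unsupervised = np.mean(recon + beta * kl)
    supervised = np.mean((y_hat[labeled] - y[labeled]) ** 2) if labeled.any() else 0.0
    return unsupervised + gamma * supervised

# Placeholder batch: 8 flattened spectrograms, 3 environmental readings each.
n, d_x, d_z, d_s = 8, 32 * 15, 4, 3
x = rng.standard_normal((n, d_x))
s = rng.standard_normal((n, d_s))            # temperature, humidity, air pressure
y = rng.uniform(0.0, 20.0, size=(n, 1))      # e.g. frame counts; used only where labeled
labeled = np.array([True, False, False, True, False, False, False, True])

# Random linear maps standing in for the convolutional encoder, decoder, and predictor.
W_mu, W_lv = 0.01 * rng.standard_normal((2, d_x, d_z))
W_dec = 0.01 * rng.standard_normal((d_z, d_x))
W_pred = 0.01 * rng.standard_normal((d_z + d_s, 1))

mu, log_var = x @ W_mu, x @ W_lv
z = reparameterize(mu, log_var, rng)
x_hat = z @ W_dec
y_hat = np.concatenate([z, s], axis=1) @ W_pred   # predictor sees latent z and context s

loss = gpn_style_loss(x, x_hat, mu, log_var, y_hat, y, labeled)
print(float(loss))
```

Because the supervised term is masked, unlabeled recordings still shape the latent space through the reconstruction and KL terms, which is the property that makes this kind of objective useful when inspections are rare.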

Methodology and Model Evaluation

The approach is semi-supervised: it learns from both labeled and unlabeled data. Performance was evaluated with 10-fold cross-validation in which each fold validates on hives the model was not trained on, so the scores reflect generalization to unseen hives. Ablation tests on the environmental inputs showed that excluding a modality such as temperature degrades performance, which underlines the role of environmental context in modeling bee activity.
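
Validating on unseen hives requires splitting by hive rather than by sample. The following is a minimal sketch of such a grouped split using only the standard library; the function name `hive_grouped_folds`, the round-robin assignment, and the count of 26 hives are assumptions made for illustration and are not taken from the paper.

```python
import random

def hive_grouped_folds(hive_ids, n_folds=10, seed=0):
    """Yield (train_idx, val_idx) pairs so that no hive appears on both sides of a fold."""
    hives = sorted(set(hive_ids))
    random.Random(seed).shuffle(hives)
    # Round-robin assignment keeps the number of hives per fold balanced.
    fold_of = {hive: i % n_folds for i, hive in enumerate(hives)}
    folds = []
    for k in range(n_folds):
        val = [i for i, h in enumerate(hive_ids) if fold_of[h] == k]
        train = [i for i, h in enumerate(hive_ids) if fold_of[h] != k]
        folds.append((train, val))
    return folds

# Hypothetical dataset: 26 hives with 10 samples each.
hive_ids = [f"hive_{i % 26:02d}" for i in range(260)]
folds = hive_grouped_folds(hive_ids)

for k, (train, val) in enumerate(folds):
    held_out = sorted({hive_ids[i] for i in val})
    print(k, len(train), len(val), held_out)
```

Splitting at random over samples instead would let recordings from the same hive land in both training and validation sets, which tends to overstate how well a model transfers to a new hive.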

Results and Analysis

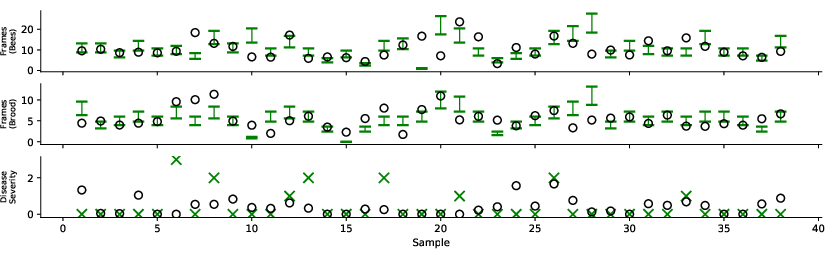

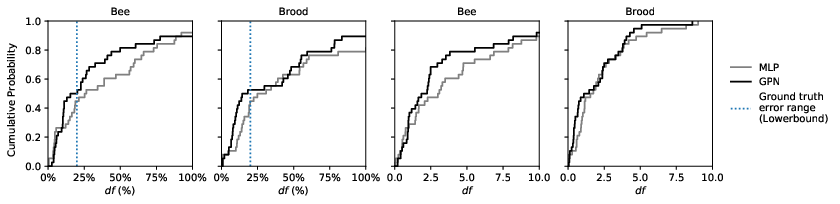

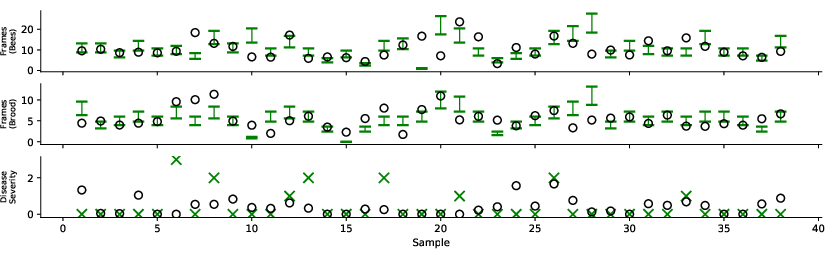

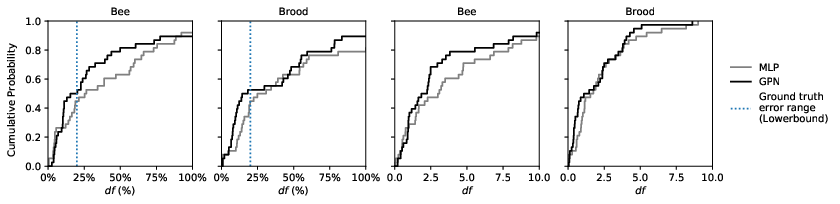

The GPN predicted both frame counts and disease severity accurately, outperforming a traditional MLP trained on FFT features (Table 1). Visualizing the embeddings showed that disease severity levels separate consistently in the latent space and are associated with distinct audio spectral profiles (Figures 3 and 4).

Figure 3: Model predictions on combined 10-fold validation set, where each fold validates on 1-3 hives that the model was not trained on.

Figure 4: Frame prediction performance comparing a supervised MLP on FFT audio features with the semi-supervised GPN.

Practical and Theoretical Implications

As an early application of audio-based machine learning in apiculture, this research lays groundwork for real-time, non-invasive hive monitoring systems. Semi-supervised learning improves data efficiency, which addresses the scarcity of annotations common in large-scale ecological studies. The methodology holds promise for longitudinal studies across diverse environmental conditions and could advance automated precision agriculture applications.

Conclusion

By combining custom sensor hardware with learned audio representations, this paper contributes toward less invasive beekeeping practices. The study emphasizes the utility of multimodal data in capturing the biophysical state of hives. Future work could extend the framework to forecast hive dynamics over longer time scales and incorporate geospatial factors, supporting ecological stewardship and food security.