- The paper presents a novel framework that fuses traditional Bayesian optimization with LLM-driven insights for effective high-dimensional search.

- It employs a Gaussian Process surrogate model and dynamic action strategies to adaptively incorporate contextual domain knowledge.

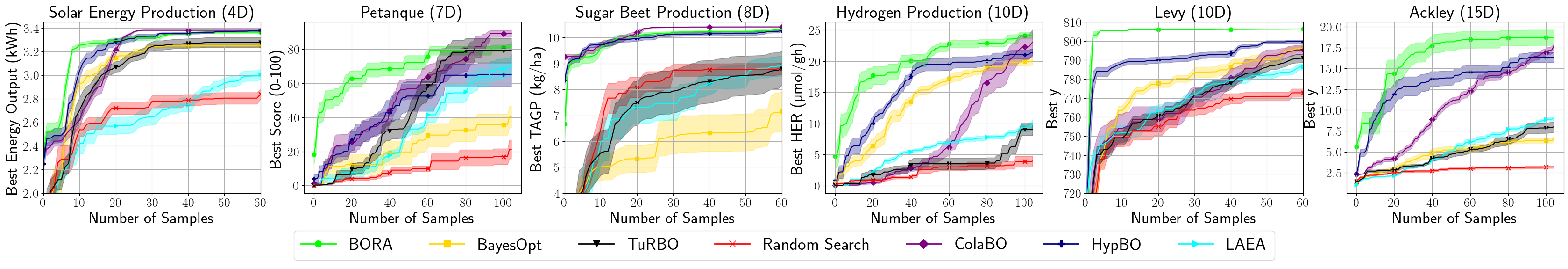

- Experimental results on synthetic and real-world benchmarks demonstrate substantial efficiency gains in optimization tasks.

Language-Based Bayesian Optimization Research Assistant (BORA)

Introduction

The paper "Language-Based Bayesian Optimization Research Assistant (BORA)" (arXiv:2501.16224) presents a framework designed to enhance Bayesian Optimization (BO) for complex scientific problems. BORA integrates LLMs to make the optimization context-aware, addressing the multivariate, high-dimensional searches that are typical in experimental science. Its hybrid strategy dynamically combines stochastic inference with domain knowledge derived from LLMs to guide exploration of the search space.

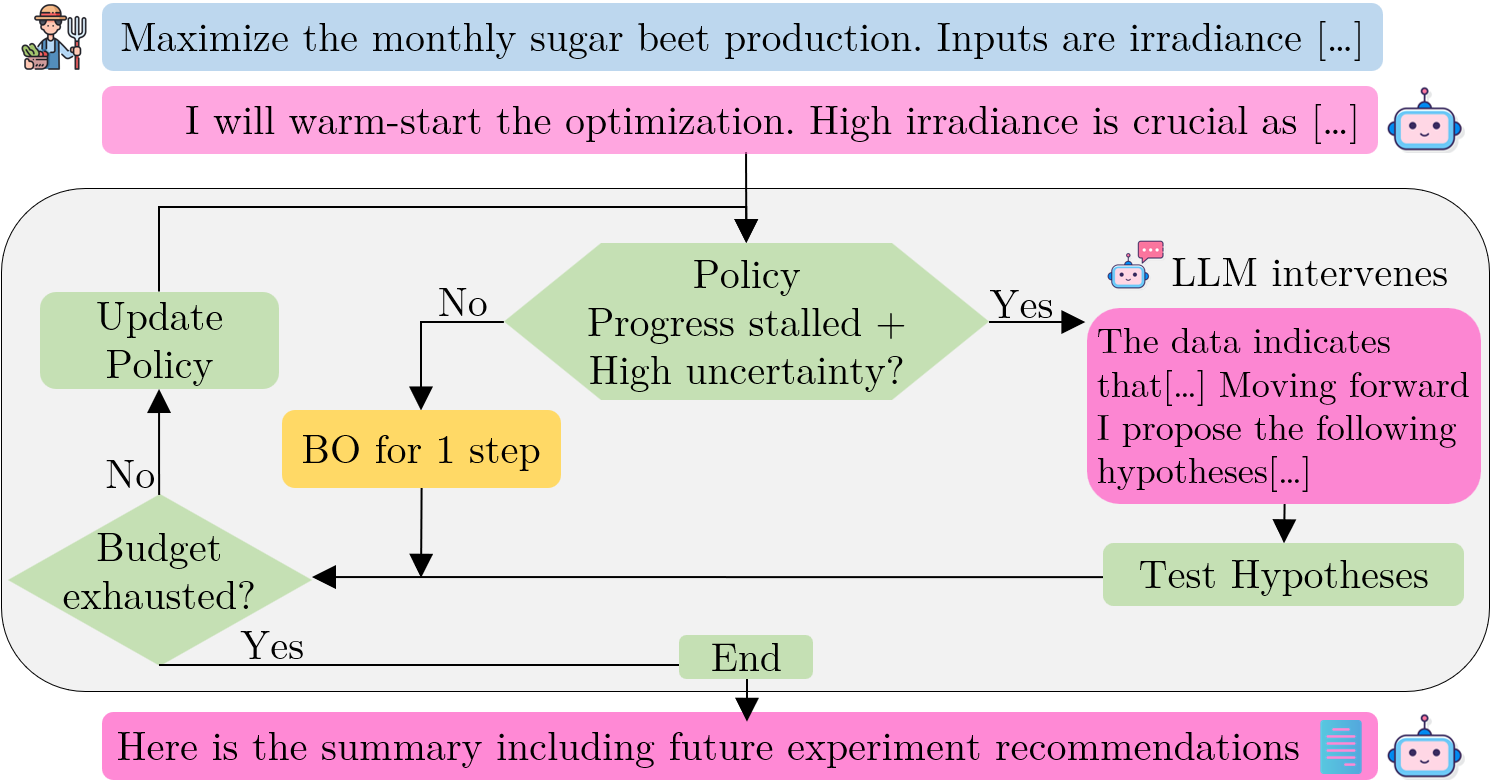

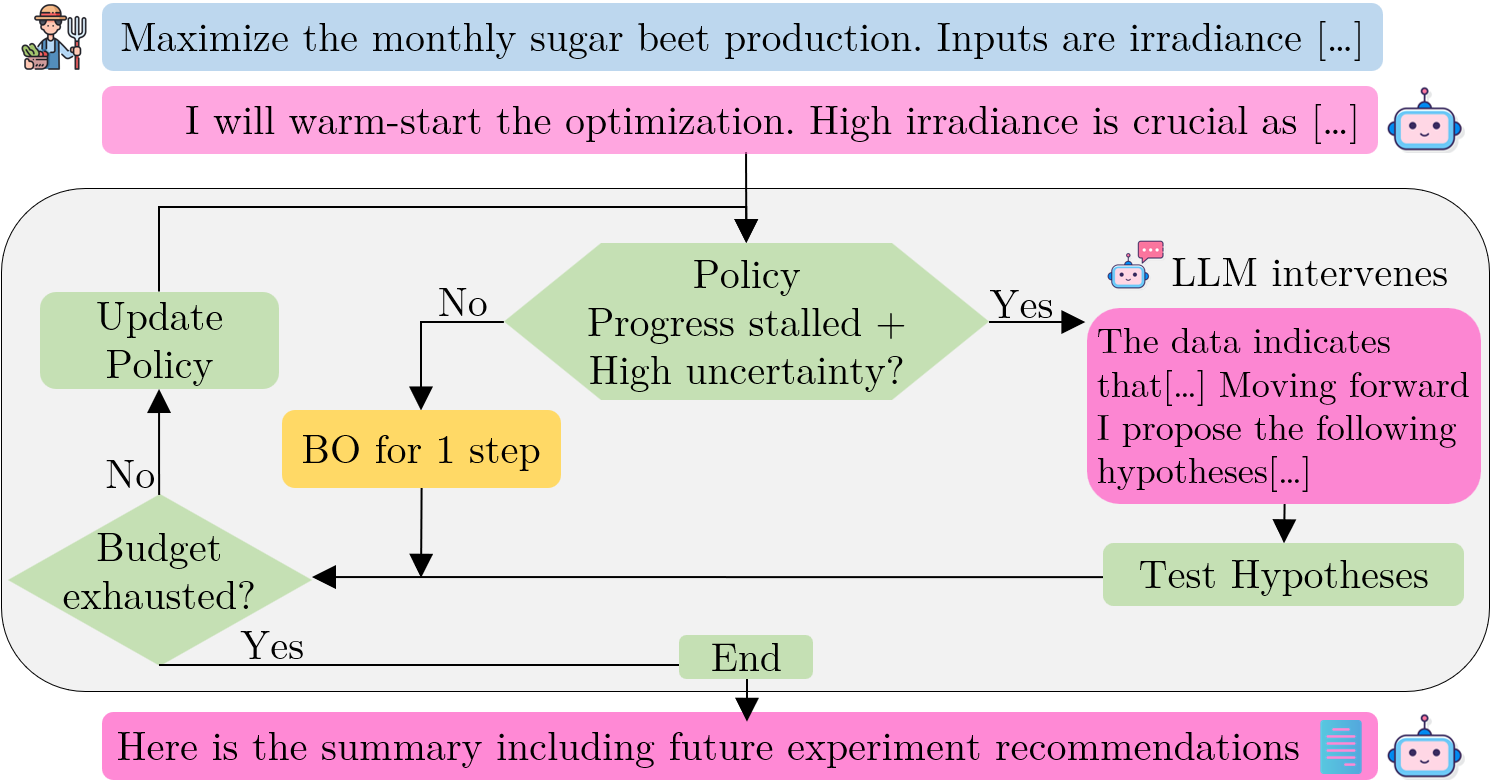

Figure 1: The BORA framework. Icons from Flaticon.

Methodology

BORA combines the strengths of conventional BO, known for its effective design space exploration, with LLMs' capacity to contextualize domain knowledge in optimization tasks. This synergy allows BORA to offer substantial improvements over traditional BO by providing real-time commentary and hypothesis-driven insights to steer the optimization process.

Framework Design

- Surrogate Model: At the core of BORA is a Gaussian Process (GP), serving as a surrogate model to approximate the unknown objective function. The GP is iteratively updated to capture new data and refine predictions.

- Actions and Adaptation: BORA chooses among three actions: continuing with vanilla BO, sampling LLM-driven suggestions, or using LLM-informed BO point selection. A policy of heuristic rules switches between these actions dynamically, based on the estimated uncertainty and on whether performance has plateaued.

- Incorporation of LLMs: LLMs provide contextual insights and suggest hypotheses that are tested within the optimization loop, enhancing exploration when the complexity or size of the search space makes conventional BO inefficient in its early iterations.
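The action-switching behavior described in the list above can be illustrated with a small Python sketch. This is not the paper's actual policy: the `Action` names, the `has_plateaued` and `choose_action` helpers, the thresholds (`window`, `tol`, `high_uncertainty`), and the specific rule ordering are all hypothetical, chosen only to show how a heuristic policy might react to a plateau and to the surrogate's uncertainty.

```python
from enum import Enum
from typing import Sequence


class Action(Enum):
    """The three action types named in the summary (labels are illustrative)."""
    VANILLA_BO = "continue with vanilla BO"
    LLM_SUGGEST = "sample LLM-driven suggestions"
    LLM_SELECT = "LLM-informed BO point selection"


def has_plateaued(best_so_far: Sequence[float], window: int = 5,
                  tol: float = 1e-3) -> bool:
    """Return True if the running best improved by less than `tol`
    over the last `window` steps (maximization is assumed)."""
    if len(best_so_far) <= window:
        return False  # not enough history to judge
    return best_so_far[-1] - best_so_far[-1 - window] < tol


def choose_action(best_so_far: Sequence[float], mean_uncertainty: float,
                  high_uncertainty: float = 0.5, window: int = 5,
                  tol: float = 1e-3) -> Action:
    """Hypothetical heuristic policy; thresholds are assumptions, not from the paper."""
    # Still making progress: keep running plain BO.
    if not has_plateaued(best_so_far, window, tol):
        return Action.VANILLA_BO
    # Stalled while the surrogate is still uncertain: ask the LLM for new hypotheses.
    if mean_uncertainty >= high_uncertainty:
        return Action.LLM_SUGGEST
    # Stalled with a confident surrogate: let the LLM pick among BO candidates.
    return Action.LLM_SELECT
```

In this sketch, `best_so_far` is the running maximum of observed objective values and `mean_uncertainty` stands in for any scalar summary of the GP's predictive uncertainty; both would be computed by the surrounding optimization loop.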

Experiments

Extensive experiments validate BORA's capabilities across synthetic and real-world benchmarks, including functions with up to 15 variables and tasks in chemical materials design and solar energy optimization.

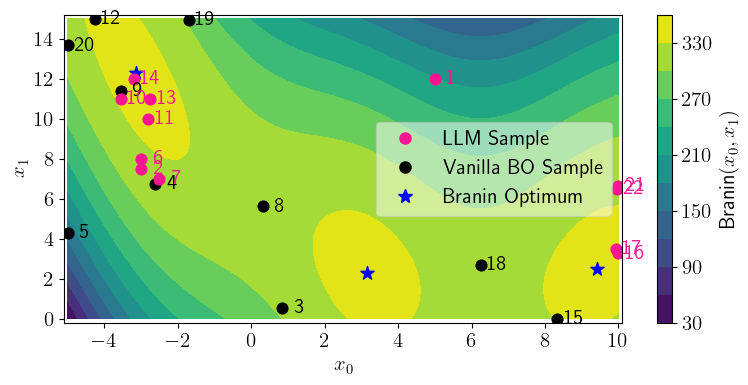

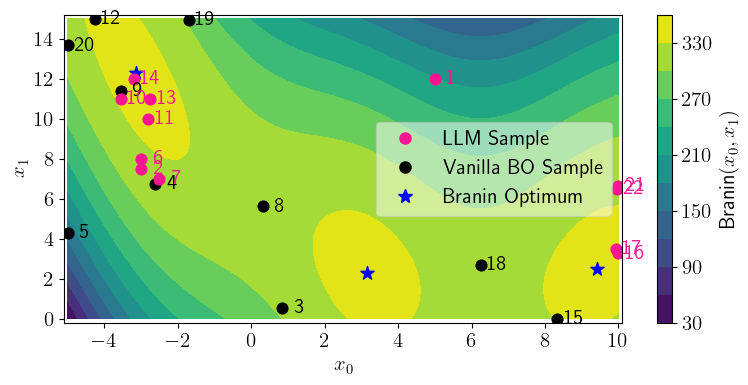

Figure 2: Visualization of BORA maximizing Branin 2D (which contains three global maxima) under a budget of 22 optimization steps (numbered points).
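For reference, the Branin function has a standard closed form, sketched below in Python with its usual constants and its usual domain of x1 in [-5, 10] and x2 in [0, 15]. The function is conventionally stated as a minimization problem with three global minima; the `neg_branin` wrapper assumes the maximization in Figure 2 is posed on the negated function, which is an assumption made here rather than something the summary states.

```python
import math


def branin(x1: float, x2: float) -> float:
    """Standard Branin function (minimization form) with the usual constants."""
    a = 1.0
    b = 5.1 / (4 * math.pi ** 2)
    c = 5 / math.pi
    r = 6.0
    s = 10.0
    t = 1 / (8 * math.pi)
    return a * (x2 - b * x1 ** 2 + c * x1 - r) ** 2 + s * (1 - t) * math.cos(x1) + s


def neg_branin(x1: float, x2: float) -> float:
    """Negated Branin, so that the three global minima become global maxima
    (assumed setup for a maximization benchmark)."""
    return -branin(x1, x2)


# Known locations of the three global optima; each has branin value 10 / (8 * pi).
GLOBAL_OPTIMA = [(-math.pi, 12.275), (math.pi, 2.275), (3 * math.pi, 2.475)]

if __name__ == "__main__":
    for x1, x2 in GLOBAL_OPTIMA:
        print(f"neg_branin({x1:.4f}, {x2:.4f}) = {neg_branin(x1, x2):.6f}")
```

All three optima share the same objective value, which is why an optimizer with a small budget may settle on any one of them.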

Results

Theoretical and Practical Implications

The introduction of LLMs in the BO framework represents a significant step towards more autonomous and adaptive optimization systems in scientific research. BORA's ability to leverage large-scale textual data and domain-specific insights extends the applicability of BO to complex real-world problems. The framework also establishes a foundation for future developments in incorporating machine learning insights directly into experimental and optimization workflows.

Conclusion

BORA exemplifies a pioneering approach by blending the exploratory strength of Bayesian methods with the reasoning capabilities of LLMs. This work underscores the potential for advanced AI systems to not only assist in experimental design and execution but also to drive substantive gains in efficiency and outcome quality across varied scientific domains. Future research may focus on refining BORA's meta-learning strategies and exploring its application in multi-fidelity and multi-objective optimization scenarios.