- The paper demonstrates that two object detection models, YOLOv5 and SSD, can identify Varroa mites and infected bees in real time.

- The YOLOv5 X variant achieved an F1 score of 0.874 and a mAP[0.5] of 0.908 on the healthy-versus-infected bees dataset, outperforming SSD.

- The methodology, including custom dataset creation and augmented image analysis, supports scalable and non-invasive bee health monitoring.

Visual Diagnosis of the Varroa Destructor Parasitic Mite Using Object Detection

The study titled "Visual Diagnosis of the Varroa Destructor Parasitic Mite in Honeybees Using Object Detector Techniques" presents a computer vision approach to monitoring the health of honeybee colonies. The Varroa destructor mite is a critical threat to Apis mellifera: it transmits several viruses and causes developmental defects, so colonies need continuous monitoring. The paper explores two state-of-the-art object detectors, YOLOv5 and SSD, for non-invasive, real-time detection of mite infestation in bees.

Background and Objectives

This research builds upon existing bee monitoring techniques, including sound analysis, RFID tracking, and various image processing methods. Conventional methods for Varroa mite detection, such as manual inspection, are labor-intensive and unsuited to real-time, large-scale application. The primary objective is to assess whether two advanced object detectors, YOLOv5 and SSD, can identify both the mites and the infected bees, with the aim of deploying such a system for real-time monitoring.

Methodology

Dataset Collection and Augmentation

A custom dataset of 803 images was compiled, including healthy bees, infected bees, and Varroa mites. Existing datasets did not fully meet the research needs, so the authors built a more comprehensive annotated dataset from available public resources. The dataset was then expanded through augmentation with the ImgAug framework, which generates transformed copies of the images to make the trained models more robust.

Figure 1: Brief overview of the dataset created for this work. Healthy bees (green), bees with pollen (yellow), drones (blue), queens (cyan), infected bees (purple), Varroa mites (red).
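One detail of augmenting a detection dataset is that every geometric transformation applied to an image must also be applied to its bounding boxes. The sketch below illustrates this with a horizontal flip in plain Python. It is a stand-in for illustration only, not the ImgAug API and not the transformation set used in the paper; the `Box` type, the function names, and the toy image are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box in pixel coordinates (hypothetical helper type)."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float
    label: str


def flip_image_horizontally(image):
    """Mirror a row-major image (a list of pixel rows) left to right."""
    return [list(reversed(row)) for row in image]


def flip_box_horizontally(box, image_width):
    """Mirror a box so it still covers the same object in the flipped image."""
    return Box(
        x_min=image_width - box.x_max,
        y_min=box.y_min,
        x_max=image_width - box.x_min,
        y_max=box.y_max,
        label=box.label,
    )


# Toy 2x4 "image" with one annotated mite covering the leftmost pixel column.
image = [[1, 2, 3, 4],
         [5, 6, 7, 8]]
mite = Box(x_min=0, y_min=0, x_max=1, y_max=2, label="varroa_mite")

flipped_image = flip_image_horizontally(image)
flipped_mite = flip_box_horizontally(mite, image_width=4)

print(flipped_image[0])  # [4, 3, 2, 1]
print(flipped_mite)      # the box now covers the rightmost pixel column
```

Flipping twice returns the original annotation, which is a convenient sanity check when writing such transformations by hand.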

Object Detection Models

YOLOv5

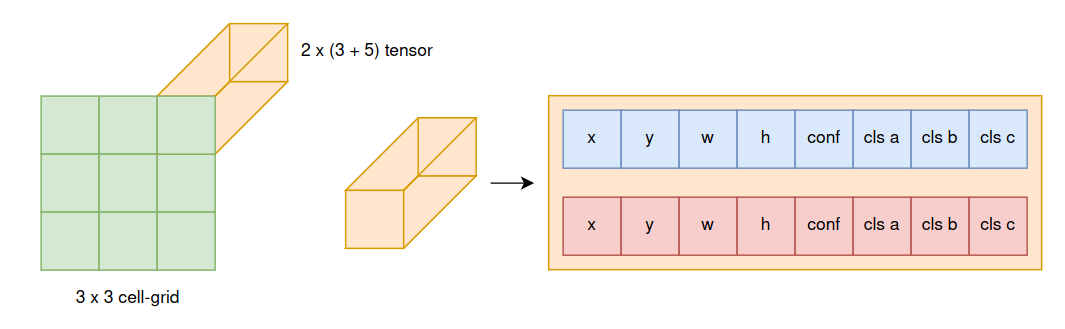

YOLOv5, an evolution of the original YOLO architecture, is a unified end-to-end detector designed for speed and precision in real-time applications. The network divides the input image into a grid of cells, and each cell predicts bounding box coordinates, a confidence score, and class probabilities.

Figure 2: Image shows a simple example of the single output tensor that represents the 3x3 cell-grid structure for object detection.
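The grid idea can be sketched in a few lines. The example below follows the simplified formulation of the original YOLO paper, in which the cell containing a box centre is responsible for that object and the output tensor has shape S x S x (B*5 + C). YOLOv5 itself uses anchor boxes and several output scales, so this is an illustration of the principle rather than of the YOLOv5 implementation. The function names and the example numbers are assumptions.

```python
def responsible_cell(box, image_width, image_height, grid_size=3):
    """Return (row, col) of the grid cell that contains the box centre.

    `box` is (x_min, y_min, x_max, y_max) in pixels.
    """
    x_min, y_min, x_max, y_max = box
    centre_x = (x_min + x_max) / 2
    centre_y = (y_min + y_max) / 2
    # Clamp so a centre exactly on the right or bottom edge stays in the grid.
    col = min(int(centre_x / image_width * grid_size), grid_size - 1)
    row = min(int(centre_y / image_height * grid_size), grid_size - 1)
    return row, col


def output_tensor_shape(grid_size, boxes_per_cell, num_classes):
    """Shape of a YOLOv1-style output tensor: S x S x (B * 5 + C).

    Each predicted box carries 5 values: x, y, width, height, confidence.
    """
    return (grid_size, grid_size, boxes_per_cell * 5 + num_classes)


# A bee annotated in a 300x300 image; its centre is at (120, 240).
print(responsible_cell((100, 220, 140, 260), 300, 300))  # (2, 1)

# 3x3 grid, one box per cell, two classes (e.g. healthy and infected).
print(output_tensor_shape(3, 1, 2))  # (3, 3, 7)
```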

SSD

The SSD architecture uses a base network for feature extraction and attaches prediction layers to the outputs of several of its layers. Because these feature maps have different resolutions, objects are predicted at multiple scales, so differently sized objects in an image can be detected in a single pass.
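To make the multi-scale idea concrete, the sketch below computes the default box scale assigned to each feature map using the linear rule from the original SSD paper, where scales are spaced evenly between a minimum and a maximum fraction of the image size. The values `s_min=0.2` and `s_max=0.9` are the defaults from that paper; whether the study summarized here used them is an assumption, and the function name is hypothetical.

```python
def ssd_default_box_scales(num_feature_maps, s_min=0.2, s_max=0.9):
    """Return one default box scale per feature map, spaced linearly.

    Scales are fractions of the input image size: early, high-resolution
    feature maps get small boxes, later ones get large boxes.
    """
    if num_feature_maps < 2:
        raise ValueError("need at least two feature maps to interpolate")
    step = (s_max - s_min) / (num_feature_maps - 1)
    return [s_min + step * k for k in range(num_feature_maps)]


scales = ssd_default_box_scales(6)
print([round(s, 2) for s in scales])  # [0.2, 0.34, 0.48, 0.62, 0.76, 0.9]
```

Small targets such as mites would be matched mainly by the smallest scales, which is one reason small-object performance depends heavily on the earliest feature maps.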

Evaluation Metrics

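Model performance was evaluated with mAP[0.5], mAP[0.5:0.95], and the F1 score. mAP[0.5] counts a detection as correct when its intersection over union (IoU) with the ground-truth box is at least 0.5, while mAP[0.5:0.95] averages the result over IoU thresholds from 0.5 to 0.95 in steps of 0.05. Together with F1, which combines precision and recall, these metrics show how well the models localize objects under increasingly strict criteria, which matters most for small objects such as mites.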
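The building blocks of these metrics can be sketched as follows. The code computes the IoU of two boxes, the F1 score from detection counts, and the list of IoU thresholds used by the COCO-style mAP[0.5:0.95]. The boxes and counts are made up for illustration and are not results from the paper; the function names are hypothetical.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    intersection = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


def f1_score(true_pos, false_pos, false_neg):
    """Harmonic mean of precision and recall, from raw detection counts."""
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# IoU thresholds averaged over by mAP[0.5:0.95].
thresholds = [round(0.5 + 0.05 * i, 2) for i in range(10)]

# Two 10x10 boxes overlapping by half: intersection 50, union 150.
print(round(iou((0, 0, 10, 10), (5, 0, 15, 10)), 3))  # 0.333

# 8 correct detections, 2 false alarms, 2 missed objects.
print(round(f1_score(8, 2, 2), 3))  # 0.8

print(thresholds[0], thresholds[-1], len(thresholds))  # 0.5 0.95 10
```

In the first example the predicted box would count as a miss even at the most lenient threshold of 0.5, which shows how quickly a small localization error reduces IoU.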

Results and Analysis

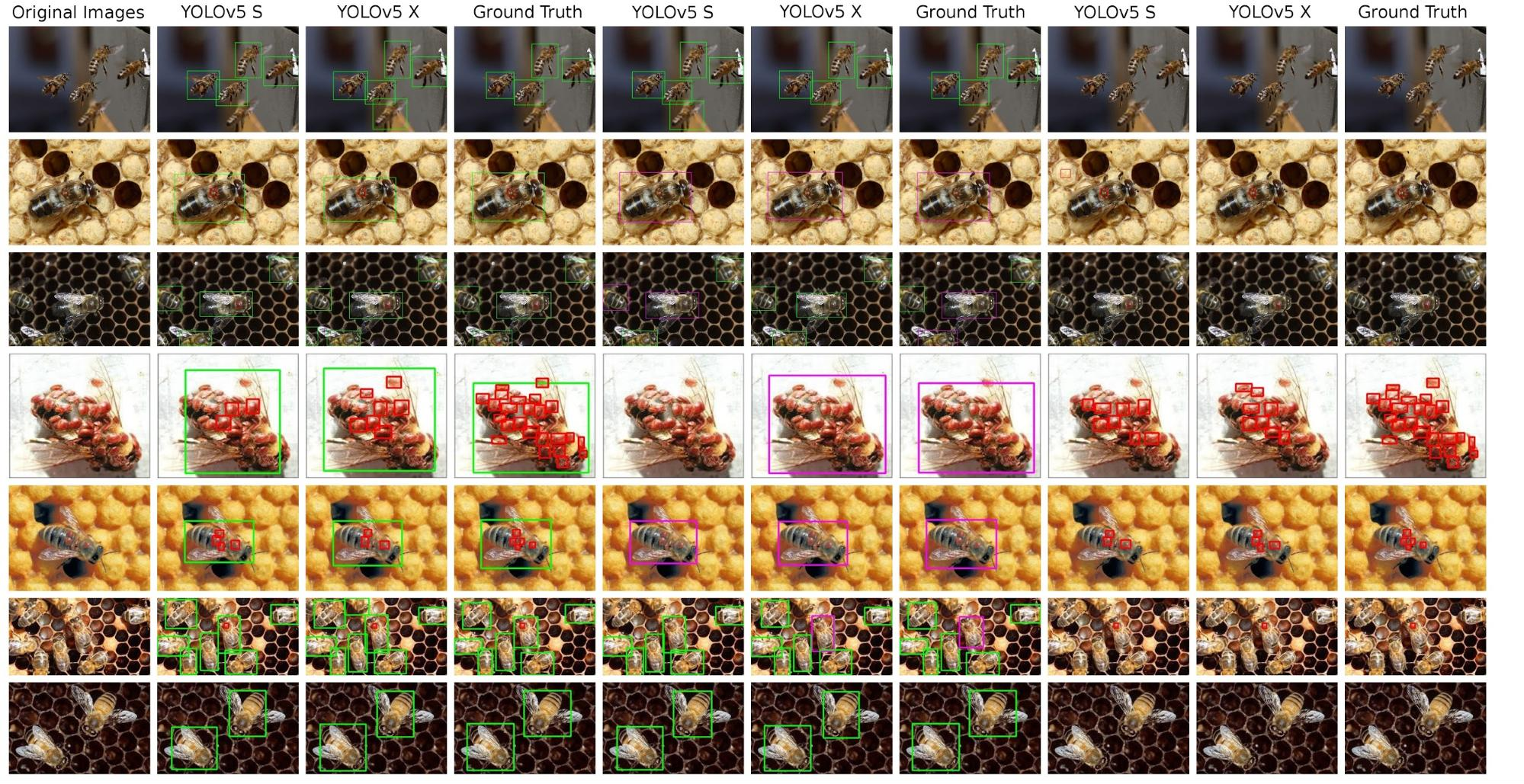

The models were evaluated on three dataset configurations: bees and mites, healthy versus infected bees, and mites only. The YOLOv5 models produced the best results, especially in detecting infected bees, with the X variant achieving an F1 score of 0.874 and a mAP[0.5] of 0.908 on the healthy-versus-infected configuration.

Figure 3: Overview of the detections performed on test images by YOLO models, showing both model predictions and ground truth for varied training configurations.

SSD with a MobileNetV2 backbone showed promise but was less accurate than YOLOv5, suggesting that further fine-tuning and optimization could improve its results.

Conclusion

This study confirms the viability of using the YOLOv5 and SSD object detection frameworks for real-time health monitoring of honeybee colonies. The ability to distinguish healthy from mite-affected bees is a step toward non-invasive, automated beekeeping practices. Future work will focus on deploying the system, refining model parameters for in-situ use, and extending the approach to other aspects of colony health. Progress in this area could significantly strengthen efforts to mitigate the impact of Varroa destructor and preserve global bee populations.

These findings establish a foundation for practical, scalable bee health inspection systems, potentially influencing broader applications in agricultural monitoring and automated ecological assessments.